Source: The Conversation – USA (2) – By Ahmed Elgammal, Professor of Computer Science and Director of the Art & AI Lab, Rutgers University

OpenAI officially discontinued its video generation tool, Sora, on April 26, 2026.

I’m a computer scientist who’s been developing AI tools and studying their evolution and adoption for the past decade, and I wasn’t surprised by OpenAI’s decision to shut down Sora.

To me, the challenges Sora faced reflect deeper limitations of AI’s creative capacities that are becoming harder to ignore.

Problems from the start

OpenAI unveiled Sora on Feb. 15, 2024, as an AI tool that gave users the ability to create short videos from text prompts. To pull this off, the technology essentially predicted how images would change from frame to frame based on what it had “learned” from millions of hours of existing footage.

But from the start, there were problems with it.

First, Sora was expensive to run. Generating video requires far more computing power than creating text or images, making it challenging for OpenAI to keep costs under control. Nor was it bringing in enough revenue to justify those costs, especially compared with other AI products that are cheaper to operate and easier to monetize. According to The Wall Street Journal, Sora was losing US$1 million per day.

Second, the early hype – TechPowerUp declared Sora the “Text-to-Video AI Model Beyond Our Wildest Imagination” – didn’t seem to translate into lasting engagement. After the initial buzz faded, users seemed to struggle to find consistent, practical uses for the technology.

Finally, tools like Sora exist in a legal gray area, where concerns about copyright and ownership of visual content force companies into a cautious, defensive stance. In practice, this has meant imposing strict prompt controls that prevent references to copyrighted characters or films; blocking outputs that look like living people or intellectual property; and establishing legal safeguards, such as watermarks and metadata tags, on outputs.

Put together, these challenges likely forced OpenAI to redirect its resources elsewhere, especially as competition across the AI industry has intensified.

A symptom of larger issues

But Sora’s failure to thrive also fits a pattern that isn’t unique to it.

Many generative AI programs geared toward creative fields have encountered a common problem: rapid initial adoption, followed by declining sustained engagement.

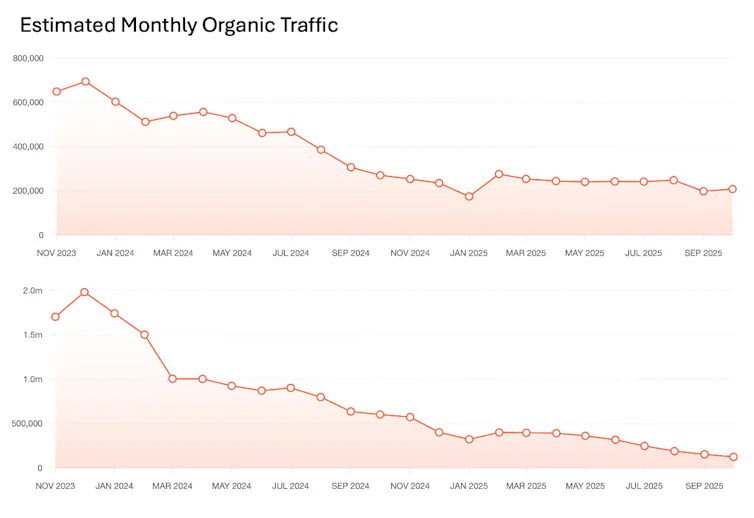

Many users appear to try image and video generation tools like Midjourney and Stability AI out of curiosity. But if stagnating traffic data is any indication, few creative professionals seem to be integrating them into their regular workflows.

Image credit: Ahmed Elgammal/Ubersuggest

OpenAI and other companies rolled out prompt-based image and video tools with the hope that the efficiency of their product would provide an attractive alternative to the time-consuming process of producing films, photographs and graphic design. Instead of spending a lot of time and money filming a video, you could simply write a prompt, and AI – trained on billions of pieces of human-generated content – would render it for you.

Generative AI’s counter-creative bias

So what happened?

AI-generated outputs of text and images can look impressively real. The bots seem to follow instructions well and appear to give users control.

But there’s an important catch. Under the hood, these systems are built to imitate what they’ve already seen, and that’s especially the case for images and videos. They’ve been trained on massive collections of visual data and rewarded for producing results that closely match the patterns contained in those visuals. That’s why the outputs can look so realistic and recognizable.

Because they’re optimized to produce familiar outputs, they end up suppressing novelty. This, it goes without saying, doesn’t lend itself to true creative breakthroughs. Even the benchmarks used by researchers to evaluate the performance of such systems tend to favor outputs that look “right,” rather than those that truly shatter expectations or take an image to the next level.

Furthermore, these systems don’t learn from a vast repository of data that encompasses the visual world and all human artistic outputs. Instead, the data used to train these models has often been curated to favor certain images and videos that are polished, clear and visually appealing. In effect, the training process teaches models not just what things look like, but what good-looking content is supposed to be.

In a recent paper, I highlighted this problem, which I call the “counter-creative bias” – the tendency of these systems to favor familiarity over meaningful novelty.

Counter-creative bias explains why so many AI-generated images and videos, even when they vary in subject or style, end up sharing a similar look and feel. And I think it explains why so many artists and other creatives don’t seem to be widely adopting these tools. Good creative work involves pushing boundaries, not simply coming up with something that’s passable and palatable.

The limits of prompting

There’s another problem with these tools.

When someone uses AI to generate an image or a video via a prompt, they’re already operating within the constraints of language.

An artist who wishes to use AI has to learn how to write elaborate prompts with the right keywords that compel the system to generate the desired composition, colors, lighting and aesthetics. To create an interesting image or a video, you have to cleverly manipulate words, combine odd concepts and deploy metaphors. It’s an entirely different skill set.

This was obvious from the beginning. When OpenAI launched DALL-E 2 in July 2022, the company demonstrated the range of interesting images it could produce by using carefully crafted prompts like “an espresso machine that makes coffee from human souls” or “panda mad scientist mixing sparkling chemicals.”

The sources of creativity in these examples were the human-written prompts themselves, not how the AI generated the image. To make something visually creative, you have to become clever at manipulating words. Users are forced to fiddle with any number of prompt variations to reach a desired or even satisfactory result.

Wading through the slop

There’s a reason Merriam-Webster and the American Dialect Society each chose “slop” as their 2025 word of the year: The internet is brimming with viral AI-generated images of world leaders and wide-eyed children, designed to coax engagement but bereft of creative value. The counter-creative bias inherent to these models is reflected in the fact that many people are becoming accustomed to an AI aesthetic characterized by hyper-polished, well-lit, perfectly composed, generically pretty images.

There was a time when AI art was seen as a burgeoning form of conceptual art.

In the summer of 2019, London’s Barbican Centre included AI art in its exhibition, “AI: More Than Human.” In November of that year, the National Museum of China in Beijing showcased 120 AI-integrated artworks, which were viewed by over 1 million people. I championed some of the artists incorporating this new technology into their work.

Back then, creating art with AI involved constant experimentation. The AI these artists used hadn’t been trained on billions of copyrighted, curated images from the internet. Instead, artists trained AI models using their own images and inspiration, while AI was allowed to manipulate pixels free of any language constraints. No universal aesthetic emerged; every AI artist seemed to come up with something unique, and their existing artistic identity shone through the medium, rather than becoming overshadowed by it.

That hopeful period appears to be over. Once pixels had to be rendered through the control of language, I think AI’s potential as an artistic medium was hampered. And now we’re left with a technology that seems best suited for memes, spam, deepfakes and porn.


Ahmed Elgammal does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Sora’s downfall signals broader problems with AI’s creative utility – https://theconversation.com/soras-downfall-signals-broader-problems-with-ais-creative-utility-280013