Source: The Conversation – Canada – By Billie Anderson, Lecturer, Disability Studies, King’s University College, Western University

Care work structures much of everyday life, yet it often remains invisible. It’s folded into assumptions about love, responsibility and familial duty rather than recognized as labour.

Nowhere is this more apparent than in caregiving, particularly when it’s performed by mothers. They’re routinely expected to absorb care work quietly, competently and without visible cost, even when that work unfolds under conditions of chronic illness, disability or grief.

Film and television often reinforce this expectation by presenting caregiving as a moral achievement rather than a social obligation. These representations frame maternal endurance as proof of love, virtue and emotional strength.

In recent cinema, motherhood shaped by loss or threat has emerged as a central narrative concern, from Hamnet to The Testament of Ann Lee to Sinners. Each film, in its own way, pushes back against the longstanding cultural fantasy of motherhood as a site of moral purity, endurance and heroism.

Rather than asking audiences to admire maternal sacrifice, these films linger on grief, struggle and ambivalence, exposing how expectations of care and emotional stability are unevenly distributed and disproportionately borne by women.

Care as a source of grief and harm

Mary Bronstein’s 2025 film, If I Had Legs I’d Kick You, belongs to this broader turn, but it pushes the critique further by stripping caregiving of even the residual comforts that often remain.

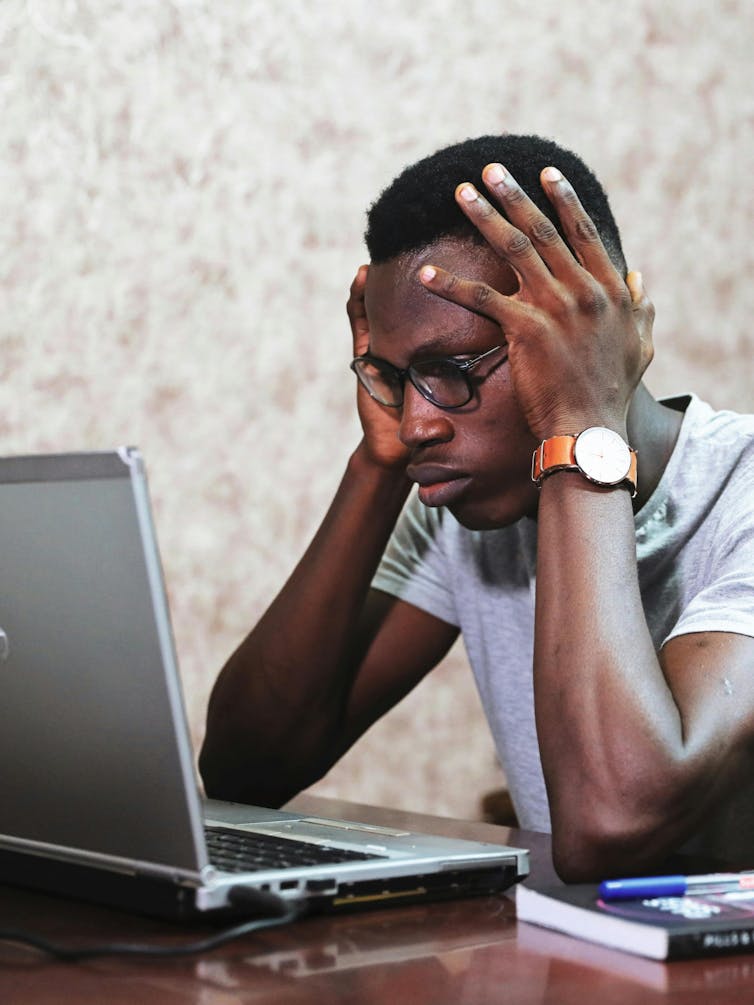

The film follows Linda (Rose Byrne, nominated for Best Actress at this year’s Academy Awards) as she navigates the daily, grinding reality of caring for her young child as serious illness reshapes every aspect of their lives, from medical appointments and disrupted routines to the emotional toll of uncertainty and constant vigilance.

The film refuses to frame care as redemptive, and instead shows how care, when imagined as an unlimited personal resource, becomes a source of depletion, grief and harm. Linda’s life revolves around her child’s needs: schedules dictated by medical systems, emotional energy consumed by anticipation and fear, and moments of solitude repeatedly interrupted by responsibility. Nothing suggests that this situation is temporary, chosen or ultimately meaningful.

If I Had Legs I’d Kick You is not a story about maternal devotion. It’s a movie about how illness and disability are folded into private family life and how mothers are positioned as the shock absorbers of systems unwilling to provide adequate structural support.

Even when doctors, therapists, institutions and procedures surround her, the film makes clear that the emotional and logistical labour of care ultimately collapses back onto Linda herself.

As in real life, it’s left to the mother to internalize and dismantle shame, to make every consequential decision in the absence of adequate support and then to absorb judgment for each of those decisions. Mothers are asked to account morally for outcomes shaped by systems they cannot control.

‘Privatization of care’

Within disability studies and feminist ethics, care has long been understood as a social and political arrangement shaped by power, gender and access to resources.

Political theorist Joan Tronto argues that care is systematically devalued when it’s treated as private, feminized and morally natural — something women are presumed to provide instinctively — rather than as labour that must be collectively organized, supported and fairly distributed across society.

If I Had Legs I’d Kick You makes this devaluation visible in the way care is repeatedly pushed out of institutions and back onto Linda. In several scenes set within medical environments, professionals deliver information, outline procedures or ask Linda to make decisions, only to disappear once those moments conclude. This leaves her alone to manage the emotional aftermath, the logistical follow-through and the fear those decisions produce.


The systems of care remain present only as brief interventions, while the ongoing labour — monitoring her child’s symptoms, anticipating emergencies, soothing anxiety, reorganizing daily life — is treated as a natural extension of motherhood rather than as work that might require sustained support.

These scenes embody what Tronto describes as the privatization of care: institutions retain authority and expertise, but responsibility is quietly transferred to the individual caregiver, who is expected to absorb the costs without complaint.

Philosopher Eva Kittay extends this critique by focusing on dependency and the economic structures that rely on care while refusing to sustain those who perform it.

Kittay emphasizes that modern social and economic systems depend on vast amounts of unpaid or underpaid care work — much of it performed by women — while offering little material, emotional or social support in return.

Managing alone

In scenes where Linda juggles medical co-ordination alongside the ordinary and extraordinary demands of daily life, the film makes visible how care consumes every register of her attention.

She fields calls from doctors while continuing her own work as a therapist, absorbs the emotional crises of her patients even as she is barely holding herself together and navigates the instability of temporary housing after her roof collapses, forcing the family into a hotel.


Her husband is frequently away for work, leaving her to manage both the logistical and emotional labour of care alone, while the film also insists on the banal realities of parenting: her child is sick but her child is also simply a child, needing comfort, discipline, patience and play.

In one moment she is trying to get her child to eat enough for the feeding tube to be removed; in another she is unexpectedly left responsible for a stranger’s baby, her capacity for care assumed and exploited without question. Care here is an uninterrupted state of readiness, a demand that stretches across professional, domestic and emotional life without pause.

Popular culture frequently relies on the figure of the “good” mother: selfless, patient and endlessly resilient. Disability narratives often reinforce this ideal by positioning caregiving as proof of moral worth.

Flipping the narrative

By declining to transform suffering into inspiration, If I Had Legs I’d Kick You challenges the expectation that caregiving should make someone better, stronger or more fulfilled. Care here does not ennoble; it depletes. Crucially, this depletion is not framed as personal failure, but as the predictable outcome of systems that rely on mothers to absorb care work without adequate social, economic or emotional support.

Many films use disability as a narrative device, positioning it as a challenge that generates growth or moral clarity in others.

If I Had Legs I’d Kick You, by contrast, represents a decisive step toward undoing this familiar storytelling structure. The child’s illness does not exist to transform the mother or to provide emotional payoff for the audience. Disability is neither a lesson nor a catalyst; it is part of the family’s reality, shaping daily life in ways that are mundane, exhausting and deeply consequential.


By resisting sentimentality and refusing easy resolution — and by centring a protagonist who is allowed to be abrasive, overwhelmed, selfish and at times difficult to like — If I Had Legs I’d Kick You offers a rare and necessary portrayal of caregiving under conditions of illness and disability.

The film does not promise healing or redemption. It insists on honesty about grief that lingers, care that depletes and the impossible expectations placed on those who are expected to hold everything together.


Billie Anderson does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. What ‘If I had Legs I’d Kick You’ tells us about mothering and thankless sacrifice – https://theconversation.com/what-if-i-had-legs-id-kick-you-tells-us-about-mothering-and-thankless-sacrifice-274074