Source: The Conversation – in French – By Gordon Osinski, Professor in Earth and Planetary Science, Western University

The last Apollo mission took place 54 years ago, and humans have not ventured beyond low Earth orbit since. That is about to change with the launch of the Artemis II mission, scheduled for next month from Kennedy Space Center in Florida.

The U.S. space agency announced that the launch of Artemis II, originally scheduled for February 8, has been postponed to March 6 because of a liquid hydrogen leak discovered during the dress rehearsal on February 3.

It will be the first crewed flight of NASA's Artemis program, and the first time humans have ventured toward the Moon since 1972. Canadian astronaut Jeremy Hansen will be on board. He will be the first non-American to fly to the Moon, making Canada the second country to send an astronaut into deep space.

NASA announced that it was moving the Artemis II launch to the March launch window, which opens on March 6, because of a liquid hydrogen leak discovered during the dress rehearsal.

I am a professor, explorer and planetary geologist. For 15 years, I have helped train Hansen and other astronauts in geology and planetary science. I am also a member of the Artemis III science team and principal investigator for Canada's first-ever lunar rover mission.

What is the Artemis mission?

NASA's Artemis program, begun in 2017, has an ambitious goal: to return to the Moon and establish a base there in preparation for sending humans to Mars. The first mission, Artemis I, launched in late 2022. After some delays, the launch of Artemis II is scheduled for March.

Hansen and his three American crewmates will be on that flight.

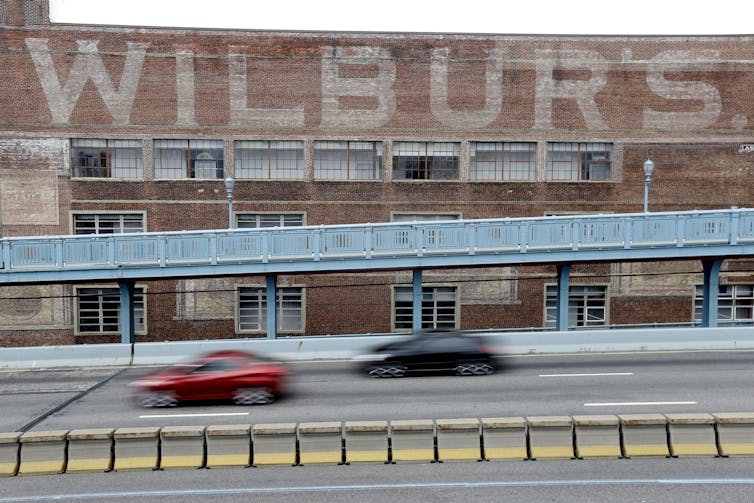

This is a particularly exciting mission. Artemis II will be the first time humans launch on NASA's giant SLS (Space Launch System) rocket, and the first time humans fly aboard the Orion spacecraft.

SLS is the most powerful rocket NASA has ever built, capable of sending more than 27 metric tons of payload (equipment, instruments, scientific hardware and cargo) to the Moon. The Orion spacecraft sits on top and will carry the crew toward the Moon. The name the crew members chose for their capsule, Integrity, reflects, in their words, the values of trust, respect, candor and humility.

What will the Artemis II crew do in space?

After launch, the crew will test Integrity's life-support systems: the water dispenser, the firefighting equipment and, of course, the toilet. Did you know there were no toilets on the Apollo missions? Instead, crews used "relief tubes."

If all goes well, Artemis II will fire what is called the Interim Cryogenic Propulsion Stage, a part of the SLS rocket still attached to Integrity, to raise the spacecraft's orbit. If everything continues to work well, Orion and its four astronauts will spend 24 hours in a high orbit, reaching as far as 70,000 kilometres from the planet.

For comparison, the International Space Station orbits just 400 kilometres above Earth.
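To put those two figures side by side, here is a minimal sketch that divides one by the other. The constant and function names are illustrative, not from NASA, and the values are simply the rounded distances quoted above.

```python
# Rounded figures quoted in the article, in kilometres.
HIGH_ORBIT_MAX_KM = 70_000  # farthest point of Orion's high Earth orbit
ISS_ALTITUDE_KM = 400       # International Space Station


def altitude_ratio(far_km: float, near_km: float) -> float:
    """Return how many times larger far_km is than near_km."""
    if near_km <= 0:
        raise ValueError("near_km must be positive")
    return far_km / near_km


ratio = altitude_ratio(HIGH_ORBIT_MAX_KM, ISS_ALTITUDE_KM)
print(f"Orion's high orbit reaches about {ratio:.0f} times the ISS altitude")
```

On these rounded numbers, Orion's high orbit takes the crew roughly 175 times farther from Earth than the space station.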

After a series of tests and checks, the crew will carry out one of the most critical steps of the mission: translunar injection (TLI). This is the moment when the spacecraft leaves Earth orbit, from which it could easily return home, for a trajectory toward the Moon and deep space.

Once Integrity is on its way to the Moon after TLI, there is no turning back, at least not without going all the way to the Moon first. Like the early Apollo missions, Artemis II will then enter what is called a "free-return trajectory." Even if Integrity's engines failed completely, the Moon's gravity would naturally swing the spacecraft around it and send it back toward Earth.

After three days of travel toward the Moon, the crew will begin the most exciting phase of the mission: the lunar flyby. Integrity will loop around the far side of the Moon, passing between 6,000 and 10,000 kilometres above its surface, much farther from Earth than any Apollo mission.

To borrow a line from Star Trek, at this point the Artemis II crew will have travelled where no human has gone before. It will be, literally, the farthest from Earth that any human has ever been.

Lunar exploration, an international effort

The presence of a Canadian astronaut on the Artemis II crew reflects the collaborative, international nature of the program.

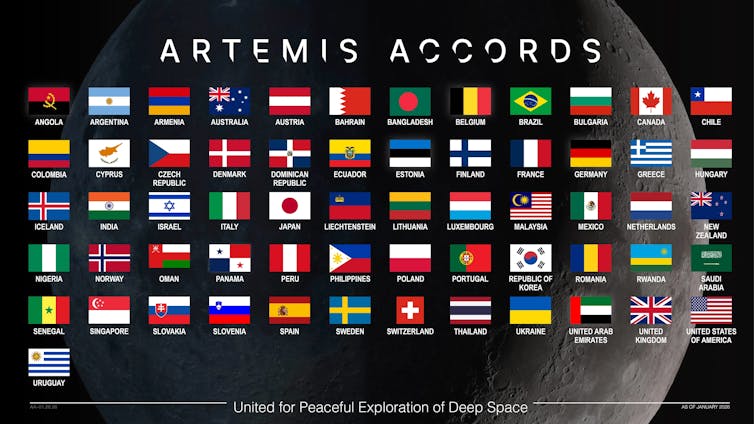

While NASA created the program and is its driving force, 61 countries have signed the Artemis Accords to date.

These accords rest on the recognition that international cooperation in space aims not only to strengthen space exploration but also to improve peaceful relations among nations. That cooperation is particularly needed today, perhaps more than at any time since the end of the Cold War.

I sincerely hope that when Integrity returns from the far side of the Moon, people around the world will take the time, if only for a few moments, to reflect together on a better future. Bill Anders, who flew on the first crewed Apollo mission to the Moon, once said:

We came all this way to explore the Moon, and the most important thing is that we discovered the Earth.

Gordon Osinski founded the company Interplanetary Exploration Odyssey Inc. He receives funding from the Natural Sciences and Engineering Research Council of Canada and the Canadian Space Agency.

– ref. Artemis II : la NASA lance bientôt la première mission habitée vers la Lune depuis 54 ans, avec un Canadien à bord – https://theconversation.com/artemis-ii-la-nasa-lance-bientot-la-premiere-mission-habitee-vers-la-lune-depuis-54-ans-avec-un-canadien-a-bord-275299