Source: The Conversation – USA (2) – By Yuanyuan (Gina) Cui, Assistant Professor of Marketing, Coastal Carolina University

Americans are skipping restaurant dinners, delaying car purchases and scouring for grocery deals. Amid tariff anxiety and broader stress over affordability, consumer confidence has dropped to levels not seen in over a decade, according to The Conference Board, a business think tank. At this point, it’s wealthier consumers who are powering the bulk of spending in the U.S. economy.

So what explains the success of Erewhon’s US$22 smoothie?

The Los Angeles grocery chain selling these fancy concoctions is doing so well, it opened three new stores in 2025 – its biggest expansion since 2011. The chain reportedly generates $1,800 to $2,500 in sales per square foot, up to five times what a typical U.S. supermarket earns.

These aren’t ordinary blended drinks; they include ingredients such as high-grade sea moss gel, adaptogenic mushrooms and collagen peptides. Often they come with a celebrity’s name attached.

It’s all part of the broader boom in the U.S. specialty food market, which has surpassed $219 billion – up nearly 150% in a decade, according to the Specialty Food Association. That far outpaces the roughly 47% growth seen in overall U.S. grocery sales over the same period.

Independent retail data from the market research firm Circana also confirms this growth: Even as inflation-weary consumers have traded down to store brands in many categories, premium and specialty products held up and even grew their dollar share of the market through 2025. On TikTok, creators who once filmed designer-bag hauls now post $12 tinned fish boards. Craft chocolate bars that cost $8–$12 are being marketed as, without irony, “self-care.”

So if consumers are this anxious, why are they still splurging? In fact, the anxiety and the splurging aren’t contradictory – they’re two expressions of the same psychological reaction.

When people feel life is out of control, they reach for something small, expensive and virtue-signaling. Consumer psychologists say this is the real reason premium food is booming while some traditional luxury brands struggle.

We are professors of consumer behavior and marketing who study how people make purchasing decisions amid economic uncertainty, and ask what explains the gap between how consumers feel and how they actually spend. Our work points to a consistent finding: When people feel they’ve lost control over the big things, they seek it in the small ones.

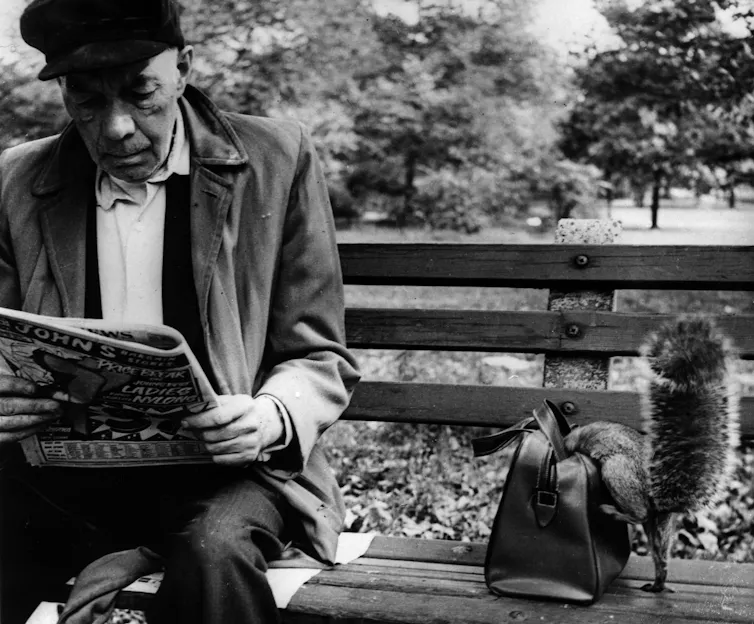

Dania Maxwell/Los Angeles Times via Getty Images

A quick detour through the makeup drawer

Economists have seen this before.

In 2001, Estée Lauder Chairman Leonard Lauder coined the term the “lipstick index” after he saw that lipstick sales rose 11% following the Sept. 11 attacks. When big luxuries feel out of reach, consumers find a small substitute. A $60 lipstick is extravagant for a cosmetic, but next to the Hermès handbag it psychologically replaces, it feels like a bargain.

Then, as now, people seek agency wherever they can find it. Consumer psychologists call this “compensatory consumption”: buying things to feel in control when life feels out of control.

While even beauty sales are softening, that impulse hasn’t disappeared. It has just found better hosts – such as food.

In many ways, food is an ideal product for this compensation. It’s experiential – something you taste, smell and savor. It’s also emotional – carrying associations with comfort, care and home. And it’s visible, because if you’re on social media, what you eat is now as public as what you wear. Premium food isn’t just eaten – it’s filmed, posted and performed.

Most importantly, it’s still relatively accessible. Twenty-two dollars may be an absurd price for a drink, but it’s cheap compared with a $400 wellness retreat.

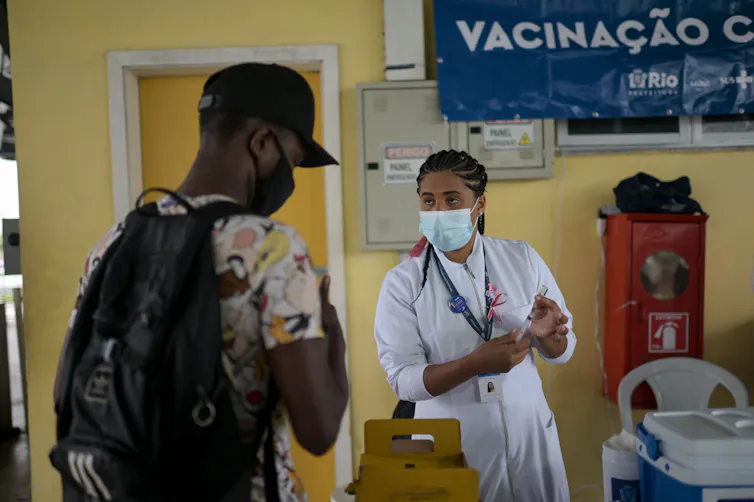

Sarah Reingewirtz/MediaNews Group/Los Angeles Daily News via Getty Images

Indulgence with a side of virtue

Here is what separates this moment from Lauder’s lipstick index. That example was purely about pleasure, as consumers sought indulgence as consolation. Today’s premium food purchases carry an additional layer: They are coded as virtuous.

An Erewhon smoothie isn’t just a treat. It’s organic, superfood-enriched and wellness-aligned. By the same logic, a $20 bottle of single-estate olive oil isn’t just cooking fat; it’s a commitment to craft and health. Premium tinned fish isn’t convenience food; it’s sustainably sourced protein caught in the wild with packaging beautiful enough to display.

This “virtue coding” does the most important psychological work in the sales transaction: It transforms indulgence into self-investment. You’re not splurging during a downturn; you’re doing something for your health. You’re not being frivolous; you’re supporting small producers. Research shows that people need reasons to justify pleasurable purchases, especially during financial anxiety – and premium food is powerful because the justification is built into the product. The organic label, the sustainability story, the wellness framing – they all dissolve guilt before it even kicks in.

Consumed in the kitchen and again on the feed

There’s a reason this trend is accelerating now. Many premium food purchases are consumed twice – once physically and once digitally. The Erewhon smoothie purchase isn’t only about the drink; it can be as much about the content. The tinned fish board is plated for Instagram before anyone takes a bite.

Social media doesn’t just amplify the trend; it completes it. When you post a photo or video of the smoothie, you’re broadcasting that you value wellness, quality and intentionality. In a cultural moment when flaunting a designer bag feels tone-deaf, food provides perfect cover. It’s the safest flex there is. It’s no surprise that one YouTube video of an Erewhon haul by food creator @KarissaEats has drawn over 14 million views.

All of this raises a fair question: Does the growing focus on the “K-shaped economy” explain this boom? As many economists see it, low- and middle-income shoppers are increasingly pulling back, as they face an affordability squeeze from health care to housing and education. But wealthier consumers are picking up the slack and then some, splurging on luxury and powering gross domestic product growth.

In this scenario, premium food thrives because it’s still affordable for the people who are doing fine, even as everyone else cuts back. That’s partly true. But this explanation doesn’t account for another shift – why affluent consumers are forgoing splurges on items like designer handbags in favor of premium groceries.

This is why the virtue framing matters so much. If the question were purely about having money to spend, traditional luxury would be booming as well. It isn’t. A case in point is LVMH, the conglomerate behind Louis Vuitton and Dior, which saw its fashion division’s profits decline 13% across all of 2025.

Even consumers who are flush with disposable income need psychological permission to spend during anxious times. The premium food phenomenon is about why food has become the thing they choose – not about who can afford to splurge.

And when a smoothie becomes a status symbol, it tells us something about economic security more broadly. Food prices have climbed nearly 30% since 2019, outpacing the 23% rise in overall consumer prices, according to the Bureau of Labor Statistics. For a family stretching a tight grocery budget, $22 isn’t a smoothie. It’s dinner.

The need for control, the desire for identity, the comfort of virtue permission – these are universal. A single mother working two jobs feels the same craving for agency as the influencer filming her grocery haul. It’s just that the purchases that satisfy those needs are increasingly constrained by price. The justification only works if you can afford your indulgence.

What’s really in the cart

The next time you’re in a grocery store and you reach for something a little more expensive than what you might need, you should pause – not to put it back, but to think about what you’re actually reaching for.

Chances are it isn’t really about the product. It’s about the feeling of choosing something when the world feels out of hand.

A $22 smoothie is never just a smoothie. It’s what people seek out when they need permission to feel OK.

The authors do not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and have disclosed no relevant affiliations beyond their academic appointment.

– ref. Why Americans are buying $22 smoothies despite feeling terrible about the economy – https://theconversation.com/why-americans-are-buying-22-smoothies-despite-feeling-terrible-about-the-economy-279425