Source: The Conversation – USA (2) – By James R. Elliott, Professor of Sociology, Rice University

Ten years ago, the infamous Tax Day storm swamped the Houston area with off-the-charts rainfall. Nearly 2 feet of rain fell in less than 15 hours in parts of the region, starting on April 17, 2016. The rain flooded thousands of homes and exceeded a 10,000-year event at some gauges.

But the storm’s damage could have been much worse.

The brunt of the deluge hit Waller County, west of Houston, where the impact was largely on farms and ranches. Had the same volume of water fallen just a few miles to the east, over Houston’s dense urban core, the toll would have been far greater.

What made the Tax Day flood so devastating was its speed. It was a flash event that struck overnight, without warning.

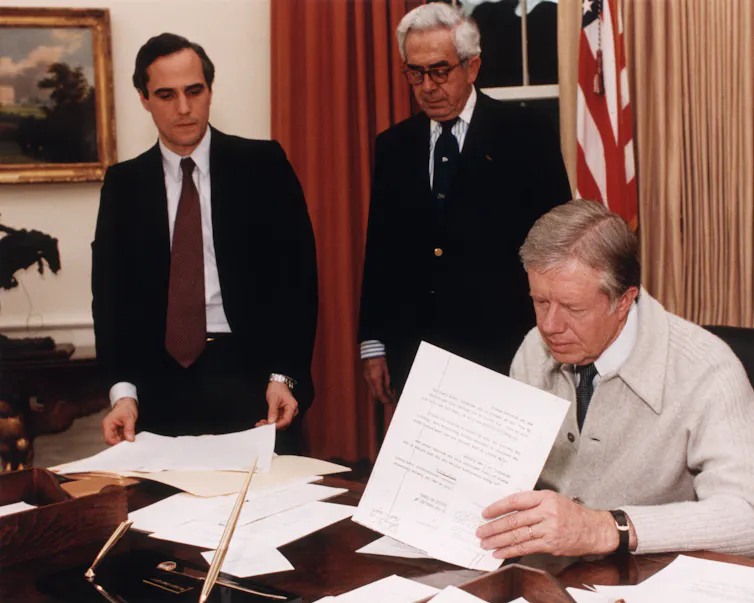

AP Photo/David J. Phillip

At Rice University’s Center for Coastal Futures and Adaptive Resilience, we used state-of-the-art hydrological modeling to see what would happen if a similar storm struck more populated parts of the city today.

The results suggest that current flood planning strategies in Houston – and similar strategies used in communities across the U.S. – are dangerously narrow in how they consider what’s at risk. In today’s world of increasingly extreme downpours, preparing for flood disasters means preparing for more than just what’s probable – it means also preparing for extreme situations that are less likely but could be far more dangerous.

The perils of relying on probability

In the United States, flood risk is publicly defined by maps produced by the Federal Emergency Management Agency. These maps, suggesting which properties face flood risks, guide everything from emergency planning to decisions related to the National Flood Insurance Program.

However, FEMA’s risk maps are based on probabilistic modeling that typically stops at the 500-year flood risk level, meaning a property has 0.2% odds – a 1 in 500 chance – of being flooded in any given year. There is a mathematical reason for doing this: There are simply too few cases to reliably estimate probabilities below that threshold.
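To see what those annual odds mean over the years someone might own a home, the short sketch below compounds them over time. It rests on assumptions that are ours for illustration and not part of FEMA's method: every year is treated as independent with the same odds, and the 30-year horizon is chosen to match a typical mortgage term. The function name is hypothetical.

```python
def chance_of_at_least_one_flood(return_period_years: float, horizon_years: int) -> float:
    """Chance of one or more floods at or above a given level within the horizon.

    Simplifying assumption (for illustration only): each year is independent
    and has the same annual probability of 1 / return_period_years.
    """
    annual_probability = 1.0 / return_period_years
    # Probability of no flood in any year, subtracted from 1.
    return 1.0 - (1.0 - annual_probability) ** horizon_years


# Assumed 30-year horizon, roughly the length of a typical mortgage.
for return_period in (100, 500):
    chance = chance_of_at_least_one_flood(return_period, 30)
    print(f"{return_period}-year flood over 30 years: {chance:.1%}")
```

Under these assumptions, a 500-year flood has roughly a 6% chance of occurring at least once in 30 years, and a 100-year flood roughly a 26% chance. The calculation says nothing about events beyond the 500-year level, which is the gap the rest of this article addresses.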

Consequently, “off the charts” events like the Tax Day flood are effectively ignored in official planning. Authorities often prefer to view them as unrealistic until more data is collected – a process that can take decades. Yet, parts of Houston suffered another 1,000-year event the following year when remnants of Hurricane Harvey stalled over the city in 2017, and Houston has seen other 500-year floods in recent years.

The Dutch, who are global leaders in flood science by necessity, since more than half their country is at risk of flooding, use a different approach. They take what they consider “worst credible floods” seriously. These are events that extend beyond standard probability models but are still considered by experts to be realistic, or credible, possibilities.

If the Tax Day storm hit today

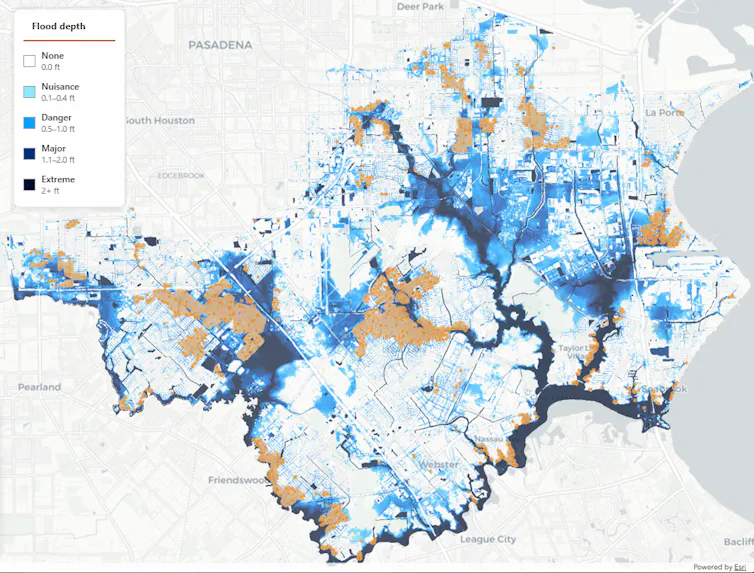

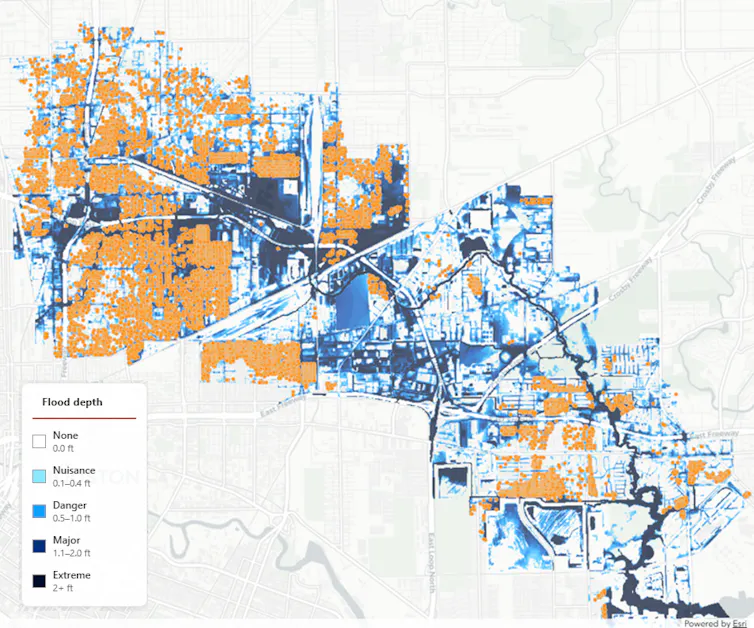

To get a clearer picture of the Houston area’s credible risks, we simulated the impact of the Tax Day flood from rainfall alone if the storm had centered over two different watersheds in Houston’s Harris County.

The suburban risk: Clear Creek runs through a middle-class suburban area near NASA’s Johnson Space Center. Vast stretches of suburban concrete block its natural drainage, and thousands of homes have been built along its winding, sluggish tributaries.

Even moderate rainfall can quickly transform these waterways into destructive torrents that overflow into nearby townships, including Friendswood and League City.

Our simulations show that if the Tax Day storm had centered over the Clear Creek area, more than 13,500 properties with homes would have quickly flooded with at least 6 inches of water. Above 6 inches is the danger zone where roads become unsafe for most passenger vehicles. In a home, when drywall gets wet it begins to wick water upward, requiring tear-outs. Even in elevated homes, that much water can damage equipment and contaminate water systems. In some areas, our simulations indicate the water depths would have exceeded 3 feet within hours.

Center for Coastal Futures and Adaptive Resilience/Rice University

The financial “what if” is even more staggering. Our analysis of publicly available data indicates that 92% of homes in Clear Creek’s flood zone likely have no flood insurance, and 52% fall outside the 100-year flood plain in FEMA’s latest proposed maps. Even with FEMA’s latest map updates, most homeowners with mortgages would not be required to carry flood insurance on homes in the area that would have flooded.

The equity gap: When we moved the storm over Hunting Bayou, a working-class area in inner Houston populated largely by residents of color, the results were even more severe. Here, flooding represents the legacy risk of midcentury urbanism, where a naturally shallow, sluggish stream was penned in by industrial warehouses and tightly packed residential streets long before modern drainage standards existed, restricting the waterway’s ability to expand and meander gracefully.

Because much of the area has flat, poorly draining soils, this watershed has become a bottleneck that can rapidly overflow during heavy rains. We found the Tax Day storm would have flooded more than half of all residential lots there with at least 6 inches of water, compared to 16% of residential lots in the Clear Creek area. And flood insurance in the Hunting Bayou area is nearly nonexistent.

Center for Coastal Futures and Adaptive Resilience/Rice University

Both simulations, viewable through our interactive online tool at the Center for Coastal Futures and Adaptive Resilience, reveal a sobering reality: Devastation that local, state and federal government planning dismisses as improbable is, in fact, entirely possible.

When FEMA or state planners prioritize probabilistic mapping over “worst-case” modeling like the simulations we conducted, they treat historic deluges like the Tax Day flood as improbable anomalies rather than predictable consequences of a changing climate and rapid urban expansion. Moreover, they discount the sudden destructive force of “normal” storm systems like the Tax Day storm, which, unlike hurricanes, do not typically arrive with several days’ notice.

Learning from ‘worst cases’

The levels of destruction we simulated could easily occur in the coming years as global temperatures rise and storm intensity increases.

To prepare, U.S. emergency planners and flood authorities can look to three lessons from the Dutch planners’ possibilistic playbook.

Embrace flexible planning: Overly detailed plans can create a false sense of control and leave neighborhoods considered to be outside the flood plain with less attention. Simple, flexible plans that empower local officials to repurpose everyday assets in real time work best. That might include preemptively deploying high-water rescue vehicles to geographically vulnerable areas.

Map potential disruption, not just probability: Extending flood planning beyond who is in or out of the 100-year flood zone can also help identify where road networks and critical infrastructure are likely to fail during extreme events. This approach also helps identify infrastructure such as public parks that can double as temporary water retention basins.

Raise public risk perception: Residents can respond more effectively when local flood authorities share plans for “what if” scenarios with the public, along with guidance on how best to prepare.

The 10th anniversary of the Tax Day flood is a reminder of why it’s crucial to stop ignoring improbable events and start scientifically leveraging the possible to make all cities safer in an age of worsening climate change.

Dominic Boyer receives funding from the National Science Foundation and the John S. Guggenheim Foundation.

James R. Elliott and Yilei Yu do not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and have disclosed no relevant affiliations beyond their academic appointment.

– ref. What if Texas’ destructive Tax Day flood had centered on inner Houston instead? It’s why cities should plan for the improbable – https://theconversation.com/what-if-texas-destructive-tax-day-flood-had-centered-on-inner-houston-instead-its-why-cities-should-plan-for-the-improbable-279964