Source: The Conversation – USA – By Daniel Thomas Potts, Professor of Ancient Near Eastern Archaeology and History, New York University

The British- and American-backed plot to overthrow Iran’s prime minister in 1953 laid the groundwork for the 1979 Iran hostage crisis and decades of hostility with the U.S. that have now culminated in a war launched on Iran by the U.S. and Israel.

Many Americans only know the anger and tension with Iran that has grown from those roots set down during the middle of the last century. But as an archaeologist who has spent over 50 years specializing in Iran, and from my research on Iranian history in the context of changes undergone by Iran’s nomadic population through time, I believe it is worth recalling the time when the two countries had a distinctly different relationship.

In the 1800s, American missionaries journeyed to what was then called Persia.

The missionaries helped build important institutions – schools, colleges, hospitals and medical schools – in Persia, many of which still exist.

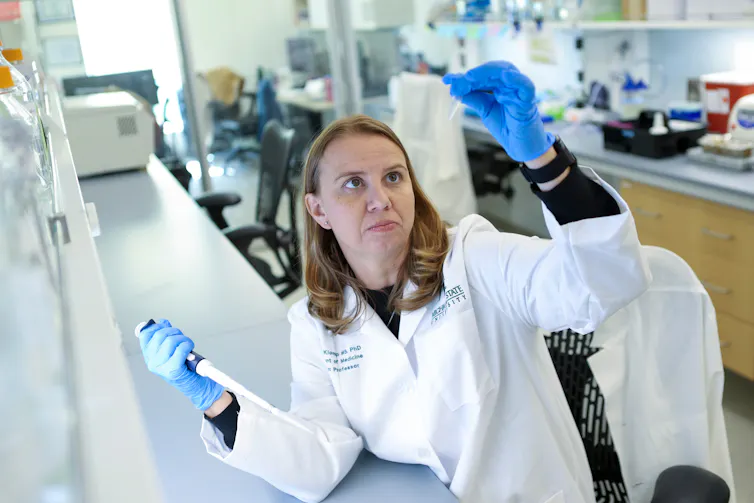

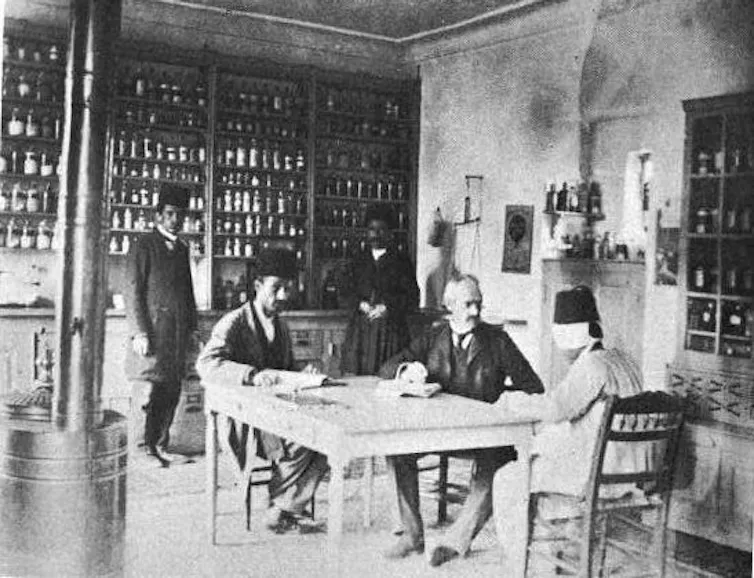

Dr. Joseph Plumb Cochran, an American physician fluent in Persian, Turkish, Kurdish and Assyrian, founded a hospital in Urmia in 1879, as well as Iran’s first medical school. When Cochran died at Urmia in northwestern Iran in 1905, over 10,000 people attended his funeral.

This image clashes with most American stereotypes of Iran and its people, and is at odds with decades of anti-Iranian sentiment emanating from Washington.

Iran and the United States, in fact, have a deep history of mutual respect and friendship.

From 1834, when the first Protestant American mission was established in Urmia, until 1953, when the CIA’s involvement in Iran’s internal affairs set the United States on the road to conflict with Tehran, Americans were the good guys.

Imperial bad guys

For years, Americans have seen images of Iranians shouting “Death to America.” President Donald Trump returned the sentiment during his first term, vowing to bring Iran death and destruction. And on Feb. 28, 2026, after weeks of threats and military preparation, the U.S. and Israel attacked Iran, killing Supreme Leader Ali Khamenei; that war continues to this day.

But before all that happened, when Americans were the good guys, other countries were the manipulators, exerting undue influence over Iran.

The bad guys, at whose hands Iran suffered most, were Russia and Great Britain. Those two nations – often at the invitation of Iran’s leaders – economically exploited Persia to further their own imperial ambitions, using sustained diplomatic, military and economic pressure.

After two ill-judged wars fought against Russia – the First (1804-1813) and Second Russo-Persian Wars (1826-1828) – Persia (the name Iran was officially adopted in 1935) lost large amounts of territory to the czar.

Much later, Russia found another means of exerting control over the Persian crown, loaning millions of rubles to its rulers, like Mozaffar ed-Din Shah, who reigned from 1896 to 1907 and needed capital to fund his lavish lifestyle.

With the exception of the Anglo-Persian War (1856-1857), Persian relations with Great Britain were less openly hostile. But what they lacked in martial vigor was more than compensated for by economic exploitation.

Toward the end of the 19th century, the shah granted exclusive concessions to the British for everything from telegraph lines to tobacco. Rights to Iran’s oil were given to the Anglo-Persian (later Anglo-Iranian) Oil Company.

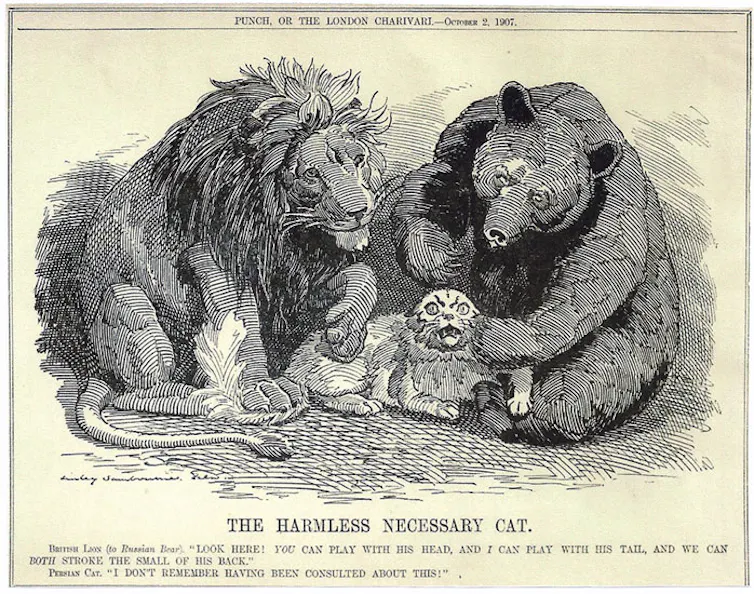

So assured were Britain and Russia in their control of Persia that, in 1907, they signed the infamous Anglo-Russian Convention. That agreement divided the country – unbeknownst to its Parliament, let alone its inhabitants – into Russian, British and “neutral” spheres of influence. After it became public, it provoked outrage among ordinary Persians and the international community at large.

America the good

Iran’s relations with the United States were completely different.

British and Russian imperial ambitions in the 19th and early 20th centuries left Iran dependent on, and exploited by, the governments of those two countries.

But the presence in Iran of American missionaries and, later, invited government technocrats, was of an entirely different quality. These were Americans offering aid, with no expectation of advantage to be gained officially for the United States government.

American Presbyterian missionary efforts in Iran began in 1834 and focused on education, with 117 schools established around Urmia by 1895. Efforts were also directed at medical and social welfare. These were nongovernmental missions. The U.S. government was conspicuous by its absence in Iran and Iranian affairs.

By the late 19th century, the Presbyterian Board of Foreign Missions had opened new stations in cities across northern Iran, from Tehran to Mashhad. American diplomatic relations with Persia were established in 1883. A decade later the American Presbyterian Hospital was founded in Tehran by John G. Wishard.

After the First World War, Presbyterian schools for both boys and girls proliferated, the most famous of which were the American College of Tehran for boys, established in 1925, and Iran Bethel School for girls.

In 1910, the Persian Parliament, aware that the country’s finances were in disarray, invited the U.S. to identify a “disinterested American expert as treasurer-general to reorganize and conduct collection and disbursement of revenue.”

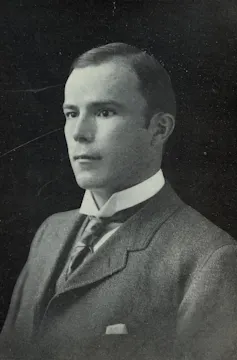

Despite Russian attempts to block the initiative, W. Morgan Shuster, a distinguished career civil servant, was appointed by Persia in February 1911. He arrived in Tehran in May, bringing with him four other Americans.

The mission lasted only eight months. Unsurprisingly, it failed, adroitly sabotaged by the combined efforts of British and Russian diplomats in Tehran.

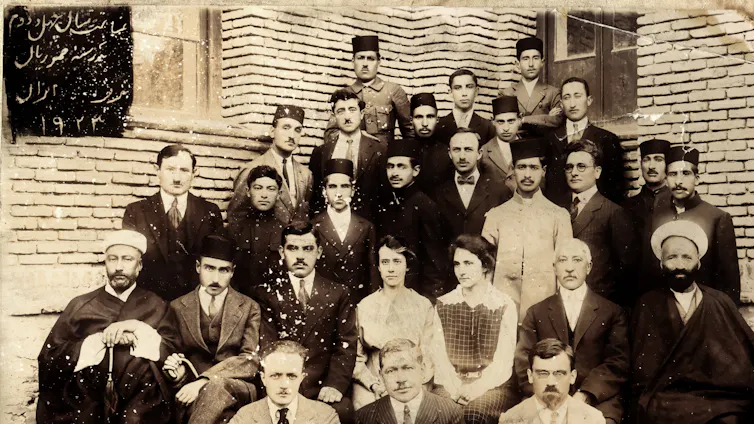

The country’s financial situation after the First World War was still precarious. With none of the colonialist baggage associated with the two European superpowers, America was the country Iran turned to, almost as a last resort, to fix what ailed it. Riza Shah, father of the last shah, appointed an American, Arthur C. Millspaugh, as the administrator-general of the finances of Persia.

When Millspaugh arrived in Tehran in 1922, a newspaper editorial addressed him with these words: “You are the last doctor called to the death-bed of a sick person. If you fail, the patient will die. If you succeed, the patient will live.”

Despite his often testy relations with foreigners, Riza Shah acknowledged Millspaugh’s American Financial Mission was “the last hope of Persia.” The fact that the mission was far from an unqualified success does not detract from its importance. Nor did it diminish America’s image as an honest broker in Iranian eyes, in contrast to that of Russia and Great Britain.

Of course, not every Iranian-American interaction during this period was positive. Robert Imbrie, the American consul in Tehran, was brutally murdered in 1924, allegedly because a fanatical religious leader accused him of being a Baha’i and poisoning a well.

Riza Shah used the episode to crack down on dissidents and impose strict controls on public gatherings.

America the bad

America’s benign image in Iran was forever shattered in 1953 when the CIA, working with Great Britain, engineered a coup against Mohammad Mossadegh, the democratically elected prime minister who had nationalized the Anglo-Iranian Oil Company.

Even though the overthrow of Mossadegh damaged Iranian trust in America, the years just prior to the Iranian revolution in 1979 saw the number of Iranian students in the United States steadily rise.

Over one-third of the approximately 100,000 Iranian students pursuing university degrees abroad in 1977 were in the U.S. By the time of the Islamic revolution two years later, that number had climbed to 51,310, making Iran by far the biggest single source of foreign students in America, with 17% of the total foreign student population. The next-largest contributor of foreign students, Nigeria, accounted for only 6%.

“Iranian students have been here for nearly a century … there are deep and abiding connections that reveal themselves when you look at the historical record,” researcher Steven Ditto, who wrote a report on Iranian students in the U.S., told The Washington Post in 2017.

The legacy of American goodwill, personal friendship and doing the right thing by Iran has not been completely lost, although the war now underway may make the two countries’ good relationship seem beyond recovery.

Deep friendships dating back well over a century can withstand a great deal. A reservoir of goodwill and affection may lie dormant while political storms rage. Iran and America were good friends in the past, and for good reason. I believe that Americans would do well to remember that.

This is an updated version of an article originally published on Aug. 19, 2020.

Daniel Thomas Potts does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Decades of hostility between Iran and the US were preceded by a little-remembered century-long friendship – https://theconversation.com/decades-of-hostility-between-iran-and-the-us-were-preceded-by-a-little-remembered-century-long-friendship-279636