Source: The Conversation – USA (2) – By Carson MacPherson-Krutsky, Research Associate, Natural Hazards Center, University of Colorado Boulder

Many Coloradans may never get an alert that could save their life during a disaster.

And the alerts that go out may not easily be understood by the people who do get them.

We are social scientists who study emergency alerts and warnings, the challenges that exist in getting emergency information to the public, and ways to fix these issues.

Research that two of us – Carson MacPherson-Krutsky and Mary Painter – conducted with researcher Melissa Villarreal shows that only 4 in 10 Colorado residents have opted in to receive local emergency alerts. And many alerts may not be written with complete information, translated into the languages residents speak, or put into formats accessible to people with vision or hearing loss. This means some of our most vulnerable neighbors could miss crucial information during a crisis.

A decentralized alert system

Alerts are complex. They can come from a variety of official sources, including 911 centers, weather forecast centers and others. Alerts can also come in many forms, ranging from emails and texts to sirens and radio broadcasts.

Our study, mandated and funded by Colorado House Bill 23-1237, focused on understanding alert systems in Colorado after the Grizzly Creek Fire in 2020 and the Marshall Fire in 2021.


These fires were destructive and highlighted issues related to emergency alerting. Alerts about the fires and calls to evacuate were delayed and inconsistently received. Most were only available in English despite census data that shows 1 in 10 residents of Eagle and Garfield counties speak Spanish at home and only “speak English less than ‘very well.’”

The resulting legislation focused on how to make emergency alerts in Colorado accessible to all, but especially those with disabilities and with limited-English proficiency.

As social scientists who study disasters, we know that hazards, like earthquakes and wildfires, reveal inequities and that certain groups fare worse and take longer to recover. People with disabilities have higher rates of death from disasters. This is not because these populations are inherently less able to respond, but because emergency planning and systems may not account for their specific needs.

Our Colorado study used interviews and a statewide survey of 222 officials who send alerts to better understand the challenges of providing alerts across the state and reaching at-risk populations.

A patchwork system

The state of Colorado does not have a uniform alert system. Local areas determine the alert systems they will use.

Some alerts get sent through systems that require people to opt in. This means that people sign up and choose to receive notifications. Neighboring counties often use different opt-in alert systems, meaning individuals who travel to different counties for work or recreation may need to register for multiple systems. Examples of these systems include Everbridge, used by Boulder County, and CodeRed, used by Adams and Park counties.


The success of these systems in an emergency relies on the community signing up for alerts.

We found that registering for alert systems was a barrier for everyone, but especially for people with limited English proficiency and people with disabilities. They may not be aware of the systems that are accessible to them, they may be wary of providing personal information, and, depending on where they live, alerts may be offered only in English.

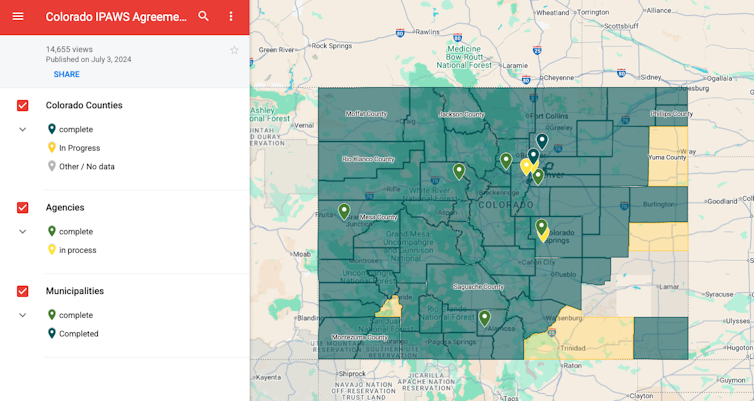

Colorado Division of Homeland Security & Emergency Management, CC BY-ND

Other systems are “opt out,” meaning people will receive alerts by default unless they turn them off. These include Wireless Emergency Alerts, or WEAs. These messages get broadcast through cellphone towers to phones in a specific geographic area. So if you have a cellphone inside a WEA boundary, you will get an alert. WEAs are used in Colorado to target specific regions in danger, such as an area that needs to evacuate, or to send an Amber Alert.

There is no national standard or guidance for opt-in or opt-out systems, which can lead to inconsistencies in how people get alerts.

Lack of resources limits alerting authorities

We found that though authorities often want to provide alerts in other languages and accessible formats, they have significant resource constraints. Time, staff, money or training can all limit the level of accessibility they can provide.

Sixty-four percent of the authorities we surveyed said they lacked funding to make alerts more inclusive.

More than a third of our respondents didn’t know if their systems could provide alerts in languages other than English or for people with disabilities. This speaks to a need for better training on how these systems work and how to use them effectively.

An alert is complete if it includes information about the source, hazard, location and time. Recently, researchers found that fewer than 10% of all Nationwide Wireless Emergency Alerts issued from 2012 to 2022 were complete.
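As a rough illustration of that definition, a completeness check could be sketched in a few lines of Python. The `AlertDraft` class, its field names and the sample values are hypothetical, chosen only for this example; they are not drawn from the study, from the WEA system or from any alerting software.

```python
from dataclasses import dataclass
from typing import List, Optional

# The four elements named above. Field names are illustrative assumptions.
REQUIRED_ELEMENTS = ("source", "hazard", "location", "time")


@dataclass
class AlertDraft:
    """A hypothetical draft alert; each element may be missing."""
    source: Optional[str] = None
    hazard: Optional[str] = None
    location: Optional[str] = None
    time: Optional[str] = None


def missing_elements(draft: AlertDraft) -> List[str]:
    """Return the required elements that are absent or blank."""
    missing = []
    for name in REQUIRED_ELEMENTS:
        value = getattr(draft, name)
        if value is None or not value.strip():
            missing.append(name)
    return missing


def is_complete(draft: AlertDraft) -> bool:
    """An alert counts as complete only if all four elements are present."""
    return not missing_elements(draft)


# A draft that names the hazard and location but not who sent it or when.
draft = AlertDraft(hazard="Wildfire", location="Example Creek area")
print(missing_elements(draft))
```

A check like this only confirms that each element is present. It says nothing about whether the wording is clear or whether the message reaches people in a language or format they can use.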

One of us – Micki Olson – worked with the federal government to develop the Message Design Dashboard to help alerting authorities craft clear and comprehensive emergency messages.

Fifty-six of Colorado’s 64 counties are Integrated Public Alert and Warning System, or IPAWS, authorities, which means they can send alerts across multiple platforms at once. This can improve alert access because it broadens who alerts reach.

Not all counties have this option, and even those that do don’t always use it. In our study, authorities noted that limited staff capacity, funds and time prevent them from getting or using IPAWS.

“We simply do not have the resources, both financial and people, to deploy all of these systems,” a survey respondent from Gunnison County said.

Alert systems were not built to be accessible

The final issue we identified is that alert systems were not developed with accessibility features such as video or image options. For example, people who are blind or have low vision won’t have access to a message unless they enable text-to-speech features on their phone in advance.

The WEA system only allows alerts to be sent in English or Spanish. Characters like accents and tildes cannot be included. Expansion of language options was planned but is now on hold for unclear reasons. Some counties have the resources to make alerts available in additional languages, but most do not.

Almost 900,000 Coloradans speak a language other than English. According to the Migration Policy Institute, more than 230,000 Coloradans have difficulty comprehending and communicating in English.

Where do we go from here?

Recent events, including the Palisades and Eaton fires in California and the devastating floods in Kerr County, Texas, demonstrate how critical it is that timely and accessible emergency alerts reach everyone, but especially the most vulnerable individuals.

However, these systems are complex, and everyone from individuals to local government can play a part in improving them.

- Federal and local governments can allocate funds to update and standardize systems. They can also implement training and procedures to ensure alerts are effective and inclusive.

- Authorities that send alerts can partner more closely with trusted community organizations and networks to reach diverse audiences.

- Researchers can identify how to better tailor systems to meet community needs.

- Individuals can learn about and sign up for alerts. To do so, visit local government websites or enter “emergency alerts” and the name of your county or city in an online search.


Carson MacPherson-Krutsky works for the Natural Hazards Center at the University of Colorado Boulder. Through the Center, she receives funding from the State of Colorado, NSF, USACE, USGS and others.

Mary Angelica Painter works for the Natural Hazards Center at the University of Colorado Boulder. Through the Center, she receives funding from agencies including NSF, USACE, USGS and others.

Micki Olson has received funding from FEMA and NOAA.

– ref. Emergency alerts may not reach those who need them most in Colorado – https://theconversation.com/emergency-alerts-may-not-reach-those-who-need-them-most-in-colorado-262308