Source: The Conversation – USA (2) – By Alexandra A Phillips, Assistant Teaching Professor in Environmental Communication, University of California, Santa Barbara

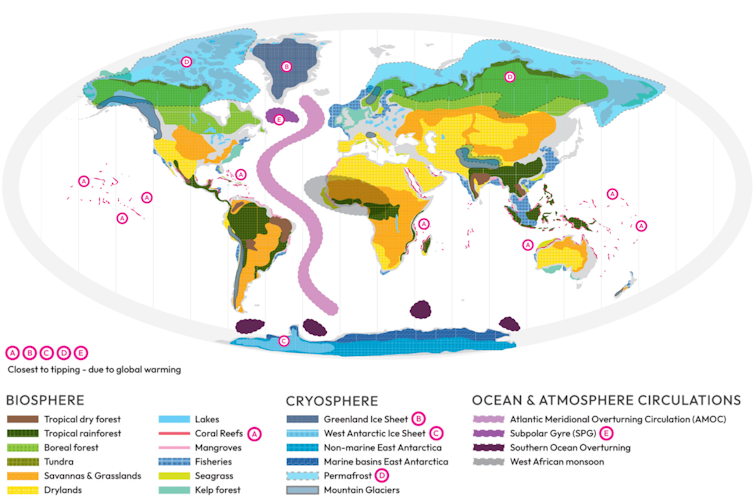

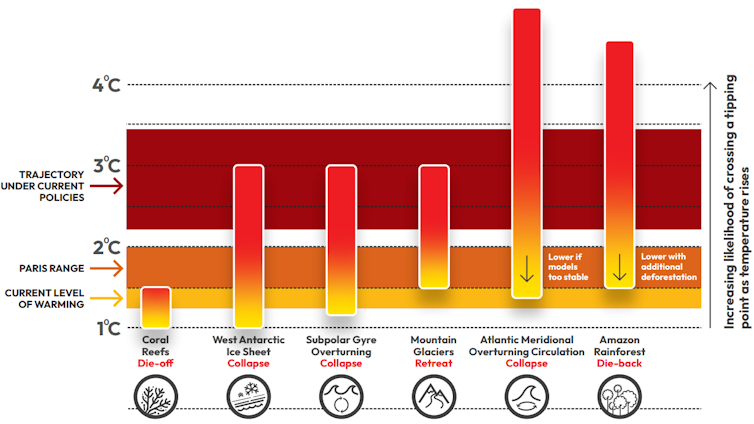

As the planet warms, it risks crossing catastrophic tipping points: thresholds where Earth systems, such as ice sheets and rain forests, change irreversibly over human lifetimes.

Scientists have long warned that if global temperatures rose more than 1.5 degrees Celsius (2.7 degrees Fahrenheit) above their levels before the Industrial Revolution, and stayed high, the risk of passing multiple tipping points would increase. For each of these elements, like the Amazon rain forest or the Greenland ice sheet, hotter temperatures lead to melting ice or drier forests that leave the system more vulnerable to further changes.

Worse, these systems can interact. Freshwater melting from the Greenland ice sheet can weaken ocean currents in the North Atlantic, disrupting air and ocean temperature patterns and marine food chains.

With these warnings in mind, 194 countries a decade ago set 1.5 C as a goal they would try not to cross. Yet in 2024, the planet temporarily breached that threshold.

The term “tipping point” is often used to illustrate these problems, but apocalyptic messages can leave people feeling helpless, wondering if it’s pointless to slam the brakes. As a geoscientist who has studied the ocean and climate for over a decade and recently spent a year on Capitol Hill working on bipartisan climate policy, I still see room for optimism.

It helps to understand what a tipping point is – and what’s known about when each might be reached.

Tipping points are not precise

A tipping point is a metaphor for runaway change. Small changes can push a system out of balance. Once past a threshold, the changes reinforce themselves, amplifying until the system transforms into something new.

Almost as soon as “tipping points” entered the climate science lexicon – following Malcolm Gladwell’s 2000 book, “The Tipping Point: How Little Things Can Make a Big Difference” – scientists warned the public not to confuse global warming policy benchmarks with precise thresholds.

The scientific reality of tipping points is more complicated than crossing a temperature line. Instead, different elements in the climate system have risks of tipping that increase with each fraction of a degree of warming.

For example, the beginning of a slow collapse of the Greenland ice sheet, which could raise global sea level by about 24 feet (7.4 meters), is one of the most likely tipping elements in a world more than 1.5 C warmer than preindustrial times. Some models place the critical threshold at 1.6 C (2.9 F). More recent simulations estimate runaway conditions at 2.7 C (4.9 F) of warming. Both simulations consider when summer melt will outpace winter snow, but predicting the future is not an exact science.

Forecasts like these are generated using powerful climate models that simulate how air, oceans, land and ice interact. These virtual laboratories allow scientists to run experiments, increasing the temperature bit by bit to see when each element might tip.
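The idea of nudging a system until it tips can be illustrated with a deliberately simple toy model. The sketch below is not taken from any climate model or from the reports cited here: the equation `dx/dt = x - x**3 + forcing`, the function names `settle` and `sweep`, and every number in it are illustrative assumptions. It raises a stand-in "forcing" bit by bit, records the step at which the system jumps to a new state, and then lowers the forcing again to check whether the system returns.

```python
# Toy illustration only: a textbook bistable equation, not a climate model.
# All names and values here are hypothetical and chosen for clarity.

def settle(x, forcing, dt=0.01, steps=10000):
    """Step dx/dt = x - x**3 + forcing forward (Euler method) until x settles."""
    for _ in range(steps):
        x += dt * (x - x**3 + forcing)
    return x

def sweep(forcings, x0):
    """Apply each forcing in turn, carrying the settled state to the next step."""
    states = []
    x = x0
    for f in forcings:
        x = settle(x, f)
        states.append(x)
    return states

# Raise the forcing in small steps from 0.00 to 0.60, then lower it again.
ramp_up = [i * 0.01 for i in range(61)]
ramp_down = list(reversed(ramp_up))

up_states = sweep(ramp_up, x0=-1.0)               # start in the "intact" state
down_states = sweep(ramp_down, x0=up_states[-1])  # try to undo the change

# First forcing at which the system has flipped to the other state.
tipping_forcing = next(f for f, x in zip(ramp_up, up_states) if x > 0)

print("system tipped at forcing of about", tipping_forcing)
print("state after forcing is returned to zero:", round(down_states[-1], 2))
```

For this particular equation the jump happens just past a forcing of about 0.385, and the system stays in its new state even after the forcing returns to zero. That is the sense in which a tipped system is hard to reverse. Real climate models are vastly more complex, which is one reason their threshold estimates span a range rather than a single value.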

Climate scientist Timothy Lenton first identified climate tipping points in 2008. In 2022, he and his team revisited the temperature ranges at which each element could collapse, integrating over a decade of additional data and more sophisticated computer models.

Their nine core tipping elements include large-scale components of Earth’s climate, such as ice sheets, rain forests and ocean currents. They also simulated thresholds for smaller tipping elements that pack a large punch, including die-offs of coral reefs and widespread thawing of permafrost.

Some tipping elements, such as the East Antarctic ice sheet, aren’t in immediate danger. The ice sheet’s stability is due to its massive size – nearly six times that of the Greenland ice sheet – making it much harder to push out of equilibrium. Model results vary, but they generally place its tipping threshold between 5 C (9 F) and 10 C (18 F) of warming.

Other elements, however, are closer to the edge.

Alarm bells sounding in forests and oceans

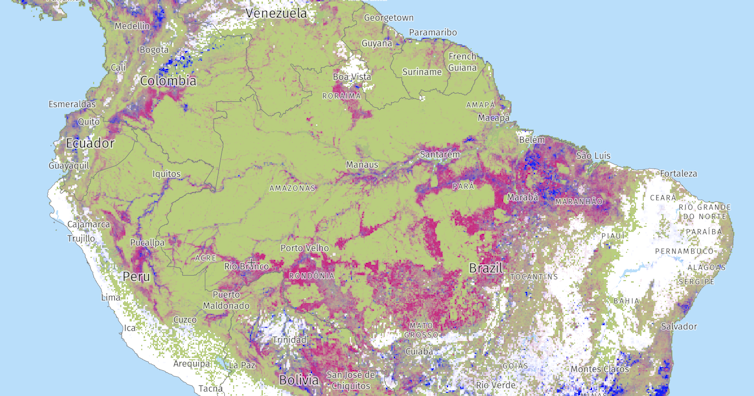

In the Amazon, self-perpetuating feedback loops threaten the stability of the Earth’s largest rain forest, an ecosystem that influences global climate. As temperatures rise, drought and wildfire activity increase, killing trees and releasing more carbon into the atmosphere, which in turn makes the forest hotter and drier still.

By 2050, scientists warn, nearly half of the Amazon rain forest could face multiple stressors. That pressure may trigger a tipping point with mass tree die-offs. The once-damp rain forest canopy could shift to a dry savanna for at least several centuries.

Rising temperatures also threaten biodiversity underwater.

The second Global Tipping Points Report, released Oct. 12, 2025, by a team of 160 scientists including Lenton, suggests tropical reefs may have passed a tipping point that will wipe out all but isolated patches.

Corals rely on algae called zooxanthellae to thrive. Under heat stress, the algae leave their coral homes, draining reefs of nutrition and color. These mass bleaching events can kill corals, stripping the ecosystem of vital biodiversity that millions of people rely on for food and tourism.

Low-latitude reefs have the highest risk of tipping, with the upper threshold at just 1.5 C, the report found. Above this amount of warming, there is a 99% chance that these coral reefs tip past their breaking point.

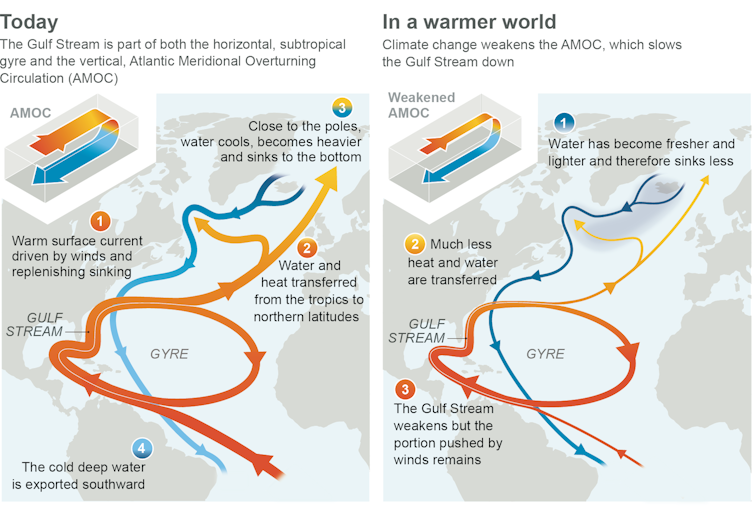

Similar alarms are ringing for ocean currents, where freshwater ice melt is slowing down a major marine highway that circulates heat, known as the Atlantic Meridional Overturning Circulation, or AMOC.

The AMOC carries warm water northward from the tropics. In the North Atlantic, as sea ice forms, the surface gets colder and saltier, and this dense water sinks. The sinking action drives the return flow of cold, salty water southward, completing the circulation’s loop. But melting land ice from Greenland threatens the density-driven motor of this ocean conveyor belt by dilution: Fresher water doesn’t sink as easily.

A weaker current could create a feedback loop, slowing the circulation further and leading to a shutdown within a century once it begins, according to one estimate. Like a domino, the climate changes that would accompany an AMOC collapse could worsen drought in the Amazon and accelerate ice loss in the Antarctic.

There is still room for hope

Not all scientists agree that an AMOC collapse is close. For both the Amazon rain forest and the North Atlantic, some say there is not enough evidence to declare that the forest is collapsing or that the currents are weakening.

In the Amazon, researchers have questioned whether the modeled vegetation data that underpin tipping point concerns are accurate. In the North Atlantic, there are similar concerns about whether the data show a long-term trend.

Climate models that predict collapses are also less accurate when forecasting interactions between multiple tipping points. Some interactions can push systems out of balance, while others pull an ecosystem closer to equilibrium.

Other changes driven by rising global temperatures, like melting permafrost, likely don’t meet the criteria for tipping points because they aren’t self-sustaining. Permafrost could refreeze if temperatures drop again.

Risks are too high to ignore

Despite the uncertainty, tipping points are too risky to ignore. Rising temperatures put people and economies around the world at greater risk of dangerous conditions.

But there is still room for preventive actions – every fraction of a degree in warming that humans prevent reduces the risk of runaway climate conditions. For example, a full reversal of coral bleaching may no longer be possible, but reducing emissions and pollution can allow reefs that still support life to survive.

Tipping points highlight the stakes, but they also underscore the climate choices humanity can still make to stop the damage.

Alexandra A Phillips does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. What are climate tipping points? They sound scary, especially for ice sheets and oceans, but there’s still room for optimism – https://theconversation.com/what-are-climate-tipping-points-they-sound-scary-especially-for-ice-sheets-and-oceans-but-theres-still-room-for-optimism-265183