Source: The Conversation – USA (3) – By Elinor Harrison, Faculty Affiliate, Philosophy-Neuroscience-Psychology, Washington University in St. Louis

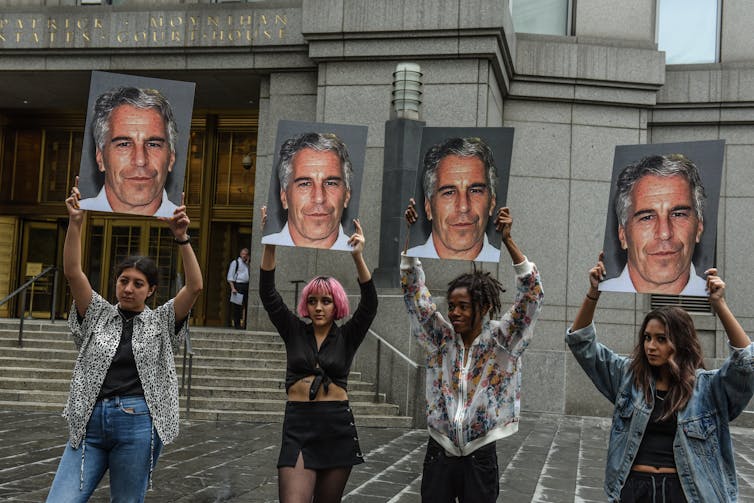

AP Photo/Gerald Herbert

On the first Sunday after being named leader of the Catholic Church in May 2025, Pope Leo XIV stood on the balcony of St. Peter’s Basilica in Rome and addressed the tens of thousands of people gathered. Invoking tradition, he led the people in noontime prayer. But rather than reciting it, as his predecessors generally did, he sang.

In chanting the traditional Regina Caeli, the pope inspired what some have called a rebirth of Gregorian chant, a type of monophonic and unaccompanied singing done in Latin that dates back more than a thousand years.

The Vatican has been at the forefront of that push, launching an online initiative to teach Gregorian chant through short educational tutorials called “Let’s Sing with the Pope.” The stated goals of the initiative are to give Catholics worldwide an opportunity to “participate actively in the liturgy” and to “make the rich heritage of Gregorian chant accessible to all.”

These goals resonated with me. As a performing artist and scientist of human movement, I have spent the past decade developing therapeutic techniques involving singing and dancing to help people with neurological disorders. Much like the pope’s initiative, these arts-based therapies require active participation, promote connection and are accessible to anyone. Indeed, not only is singing a deeply ingrained human cultural activity, but research increasingly shows how good it is for us.

The same old song and dance

For 15 years, I worked as a professional dancer and singer. In the course of that career, I became convinced that creating art through movement and song was integral to my well-being. Eventually, I decided to shift gears and study the science underpinning my longtime passion by looking at the benefits of dance for people with Parkinson’s disease.

The neurological condition, which affects over 10 million people worldwide, is caused by neuron loss in an area of the brain that is involved in movement and rhythmic processing – the basal ganglia. The disease causes a range of debilitating motor impairments, including walking instability.

David L. Ryan/The Boston Globe via Getty Images

Early on in my training, I suggested that people with Parkinson’s could improve the rhythm of their steps if they sang while they walked. Even as we began publishing our initial feasibility studies, people remained skeptical. Wouldn’t it be too hard for people with motor impairment to do two things at once?

But my own experience of singing and dancing simultaneously since I was a child suggested it could be innate. While Broadway performers do this at an extremely high level of artistry, singing and dancing are not limited to professionals. We teach children nursery rhymes with gestures; we spontaneously nod our heads to a favorite song; we sway to the beat while singing at a baseball game. Although people with Parkinson’s typically struggle to do two tasks at once, perhaps singing and moving were such natural activities that they could reinforce each other rather than distract.

A scientific case for song

Humans are, in effect, hardwired to sing and dance, and we likely evolved to do so. In every known culture, evidence exists of music, singing or chanting. The oldest discovered musical instruments are ivory and bone flutes dating back over 40,000 years. Before people played music, they likely sang. The discovery of a 60,000-year-old hyoid bone shaped like a modern human’s suggests our Neanderthal ancestors could sing.

In “The Descent of Man,” Charles Darwin speculated that a musical protolanguage, analogous to birdsong, was driven by sexual selection. Whatever the reason, singing and chanting have been integral parts of spiritual, cultural and healing practices around the world for thousands of years. Chanting practices, in which repetitive sounds are used to induce altered states of consciousness and connect with the spiritual realm, are ancient and diverse in their roots.

Though the evolutionary reasons remain disputed, modern science is increasingly validating what many traditions have long held: Singing and chanting can have profound benefits to physical, mental and social health, with both immediate and long-term effects.

Physically, the act of producing sound can strengthen the lungs and diaphragm and increase the amount of oxygen in the blood. Singing can also lower heart rate and blood pressure, reducing the risk of cardiovascular diseases.

Vocalizing can even improve your immune system, as active music participation can increase levels of immunoglobulin A, one of the body’s key antibodies to stave off illness.

Singing also improves mood and reduces stress.

Studies have shown that singing lowers cortisol levels, the primary stress hormone, in healthy adults and people with cancer or neurologic disorders. Singing may also rebalance autonomic nervous system activity by stimulating the vagus nerve and improving the body’s ability to respond to environmental stresses. Perhaps this is why singing has been called “the world’s most accessible stress reliever.”

Isaac Buj/Europa Press via Getty Images

Moreover, chanting may make you aware of your inner states while connecting you to something larger. Repetitive chanting, as is common in rosary recitation and yogic mantras, can induce a meditative state, fostering mindfulness and altered states of consciousness. Neuroimaging studies show that chanting elicits brainwave patterns associated with the suspension of self-oriented and stress-related thoughts.

Singing as community

Singing alone is one thing, but singing with others brings about a host of other benefits, as anyone who has sung in a choir can likely attest.

Group singing provides a mood boost and improves overall well-being. Increased levels of neurotransmitters such as dopamine, serotonin and oxytocin during singing may promote feelings of social connection and bonding.

When people sing in unison, they synchronize not just their breath but also their heart rates. Heart rate variability, a measure of the body’s adaptability to stress, also improves during group singing, whether you’re an expert or a novice.

In my own research, singing has proven useful in yet another way: as a cue for movement. Matching footfalls to one’s own singing is an effective tool for improving walking, more so than passively listening to music. Seemingly, active vocalization requires a level of engagement, attention and effort that can translate into improved motor patterns. For people with Parkinson’s, for example, this simple activity can help them avoid a fall. We have shown that people with the disease, in spite of neural degeneration, activate brain regions similar to those of healthy controls. And it works even when you sing in your head.

Whether you choose to sing with the pope or not, you don’t need a mellifluous voice like his to break into song. You can sing in the shower. Join a choir. Chant that “om” at the end of yoga class. Releasing your voice might be easier than you think.

And, besides, it’s good for you.


Elinor Harrison received funding from the National Institutes of Health, the National Endowment for the Arts and the Grammy Museum Foundation. She is affiliated with the International Association of Dance Medicine and Science and the Society for Music Perception and Cognition.

– ref. We are hardwired to sing − and it’s good for us, too – https://theconversation.com/we-are-hardwired-to-sing-and-its-good-for-us-too-262861