Source: The Conversation – USA – By Bert Johnson, Professor of Political Science, Middlebury College

A majority of Americans say they are “frustrated” or “angry” – or both – with Republicans and Democrats, according to the Pew Research Center. But that rarely translates into support for independent or third-party candidates.

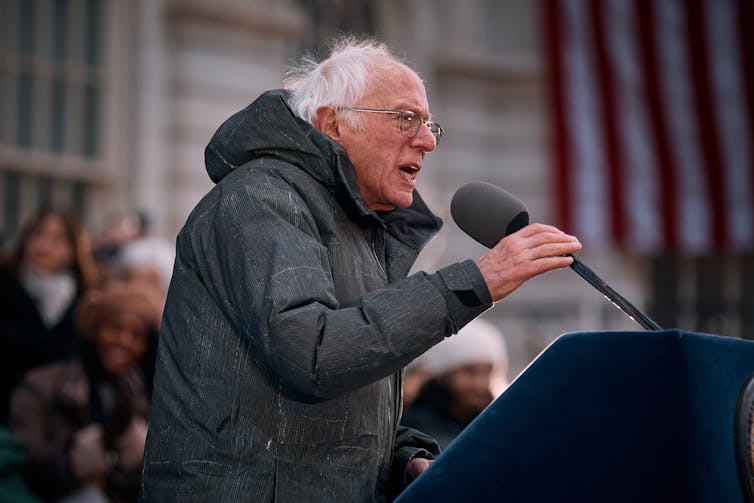

One exception has been in the Northeast. Angus King of Maine and Bernie Sanders of Vermont are the Senate’s only independents. King, Lowell Weicker of Connecticut and Lincoln Chafee of Rhode Island account for three of the five independent and third-party governors elected nationwide since 1990. And of the 23 current independent or third-party state legislators in the country, excluding technically nonpartisan Nebraska, 14, or 61%, are in New England.

As a political scientist who has taught in Vermont for two decades, I was intrigued by the question of why third-party and independent candidates are so successful, relatively speaking, in the Northeast. And can this region teach us lessons about broadening the choices available to voters?

Market forces

In their classic book “Third Parties in America,” Steven Rosenstone, Roy Behr and Edward Lazarus argue that alternative parties succeed where motivation for third-party voting is high, constraints against doing so are low, or both.

Those may sound like obvious points, but let’s explore them individually. First, motivation. Third parties do better when voters are frustrated with the two major parties and see them as incapable or unwilling to respond to their needs.

In a polarized national political climate, New Englanders might appear to be good candidates for anger. Vermont gave Donald Trump his smallest share of the 2024 presidential vote of any state – less than a third. Massachusetts was not far behind.

This should not necessarily be interpreted as enthusiasm for the Democrats. Pew found that two-thirds of Democrats are frustrated with their own party.

Channeling some of this discontent, Vermont Gov. Phil Scott, although a Republican, has frequently criticized Trump and accused the president and other politicians in Washington of creating “chaos.”

Still, the idea that discontent explains New England’s openness to third parties and independents clashes with other pieces of the picture. Other states where most voters are hostile to Trump, such as California, Maryland and Illinois, have few successful third-party or independent candidates.

And the Northeast has been fairly friendly territory for third parties and independents in very different national contexts. New England elected far more third-party and independent legislators than other regions back in 2010 as well, at a point during Barack Obama’s presidency when political discontent was most famously centered within the conservative tea party movement.

Limits on minor parties

That brings us to the second possibility: constraints on third parties, or their absence.

Unlike democracies such as Brazil and Spain, which use proportional representation and so award some seats even to parties that garner small shares of the overall vote, the United States uses a “first-past-the-post” electoral system, under which candidates can win with pluralities of the vote. That system is stacked against third parties.

This type of voting encourages citizens to consider only the two major parties because other candidates are generally considered not to have any realistic shot of winning. This helps explain why Sanders ran for president as a Democrat in 2016 and 2020.
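To make the contrast concrete, here is a minimal sketch comparing a winner-take-all plurality contest with one common proportional formula, the largest-remainder method. The party names, vote totals and seat count are hypothetical, and countries that use proportional representation differ in the exact formula they apply, so this is an illustration of the general idea rather than a model of any real election.

```python
def plurality_winner(votes):
    """Return the single winner under first-past-the-post: the most votes wins."""
    return max(votes, key=votes.get)


def largest_remainder_seats(votes, seats):
    """Allocate seats proportionally using the largest-remainder method.

    Each party first gets the whole-number part of its proportional share;
    any seats left over go to the parties with the largest remainders.
    """
    total = sum(votes.values())
    # Integer arithmetic avoids floating-point rounding surprises.
    allocation = {party: v * seats // total for party, v in votes.items()}
    remainders = {party: v * seats % total for party, v in votes.items()}
    leftover = seats - sum(allocation.values())
    for party in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        allocation[party] += 1
    return allocation


# Hypothetical vote totals, chosen only for illustration.
votes = {"Party A": 46, "Party B": 39, "Party C": 15}

print(plurality_winner(votes))             # one winner takes the single seat
print(largest_remainder_seats(votes, 10))  # ten seats shared out by vote share
```

In this made-up example, the party with 15% of the vote wins nothing in the plurality contest but holds one of 10 seats under the proportional allocation.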

In presidential voting, the Electoral College sinks third-party candidates, even those with wide support, if their voters are not concentrated enough to win individual states. Running as an independent in 1992, businessman Ross Perot won 19% of the national vote but received exactly zero votes in the Electoral College.

These constraints, while formidable in national politics, play out differently at the state and local levels. Absent the Electoral College, there is less of a guarantee that the Democrat and Republican will always be perceived as the two most viable candidates in local races, especially in regions with lopsided support for one party or the other.

In areas with overwhelming Democratic support, the next most viable option might not be a Republican but a progressive. In areas with overwhelming Republican support, Democrats could be less viable than libertarians.

Access to the ballot

But if this is true, why do we not see just as many third-party and independent victories in red states, such as Alabama and Mississippi, as we do in Vermont and Maine? The answer lies in a seemingly mundane but crucial factor: ballot access laws.

States set the rules governing which candidates qualify for the ballot. In almost every state, Democrats and Republicans have advantages over other parties or independents. But in the Northeast it is easier for independents and candidates from other parties to get on the ballot.

In no New England state does an independent candidate for a state legislative seat have to collect more than 150 signatures to secure a ballot spot. In Georgia, by contrast, candidates must collect signatures equal to 5% of the total number of registered voters in the jurisdiction holding an election, which can translate into thousands of signatures.
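A quick back-of-the-envelope calculation shows how a percentage rule scales. The sketch below assumes a 5% requirement that is rounded up to a whole signature; the district sizes are hypothetical, and the rounding rule is an assumption rather than a statement of how any state actually counts.

```python
NEW_ENGLAND_MAX = 150  # upper bound cited above for New England legislative races


def signatures_required(registered_voters, percent=5):
    """Signatures needed under a percent-of-registered-voters rule.

    Uses integer arithmetic and rounds up (an assumption made for this sketch).
    """
    return (registered_voters * percent + 99) // 100


# Hypothetical district sizes, not real figures.
for voters in (10_000, 40_000, 100_000):
    needed = signatures_required(voters)
    print(f"{voters:>7,} registered voters -> {needed:,} signatures "
          f"(vs. at most {NEW_ENGLAND_MAX} in New England)")
```

Under these assumptions, a district of 40,000 registered voters would require 2,000 signatures, more than 13 times the New England ceiling.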

To see the impact of ballot access rules on candidates outside of the major parties, you only need look at one of the few states outside of New England where such candidates have done as well: Alaska.

Alaska has long had ballot access rules that are among the most open in the nation. Candidates for state House races need only pay a filing fee of US$30 to get a ballot line, and it is nearly as easy for them to file as a recognized party or group.

That helps explain why five independents currently serve in the Alaska House, why the state elected a third-party candidate as governor in 1990 and an independent in 2014, and why it reelected U.S. Sen. Lisa Murkowski as a write-in candidate after she lost the Republican primary in 2010.

Ease of ballot access attracts outsider candidates, increases competition, and gives voters an outlet for their frustrations.

To sum up, if people want more choices in elections, they will need to change the rules.

Bert Johnson does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Why US third parties perform best in the Northeast – https://theconversation.com/why-us-third-parties-perform-best-in-the-northeast-273749