Source: The Conversation – Global Perspectives – By Renaud Joannes-Boyau, Professor in Geochronology and Geochemistry, Southern Cross University

When we think of lead poisoning, most of us imagine modern human-made pollution: paint, old pipes or exhaust fumes.

But our new study, published today in Science Advances, reveals something far more surprising: our ancestors were exposed to lead for millions of years, and it may have helped shape the evolution of the human brain.

This discovery shows that the toxic substance we battle today has been intertwined with the story of human evolution from its very beginning.

It reshapes our understanding of both past and present, tracing a continuous thread between ancient environments, genetic adaptation, and the unfolding evolution of human intelligence.

A poison older than humanity itself

Lead is a powerful neurotoxin that disrupts the growth and function of both brain and body. There is no safe level of lead exposure, and even the smallest traces can impair memory, learning and behaviour, especially in children. That’s why eliminating lead from petrol, paint and plumbing is one of the most important public health initiatives.

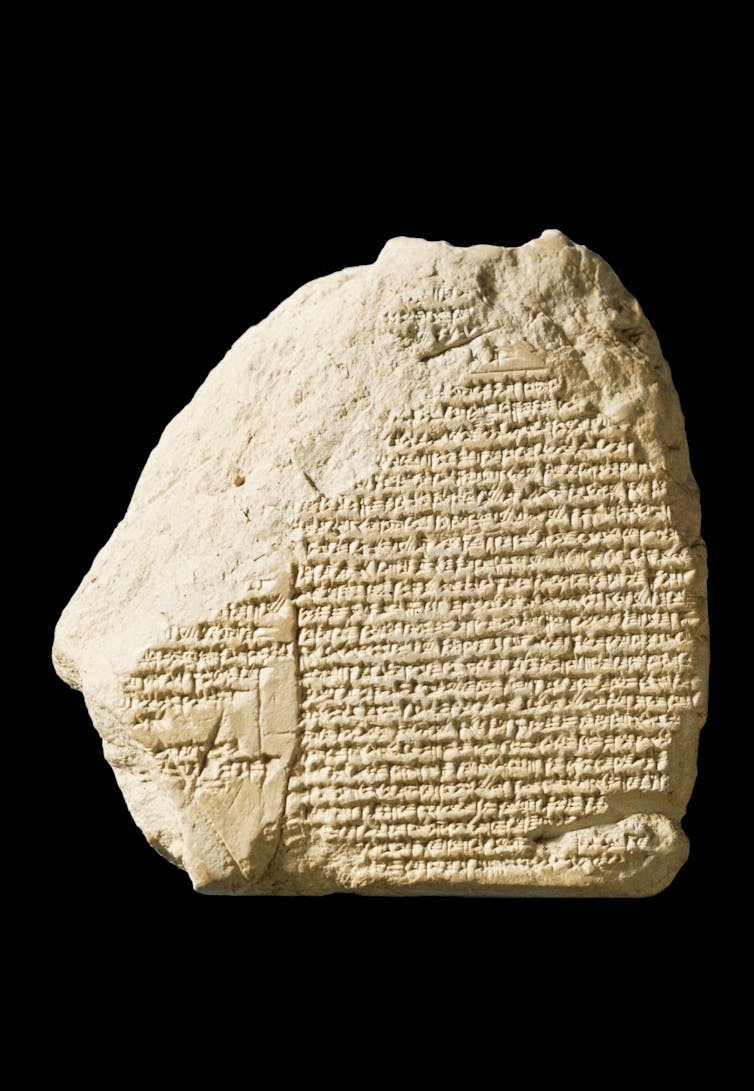

Yet while analysing ancient teeth at Southern Cross University, we uncovered something wholly unexpected: clear traces of lead sealed within the fossils of early humans and other ancestral species.

These specimens, recovered from Africa, Asia and Europe, were up to two million years old.

Using lasers finer than a strand of hair, we scanned each tooth layer by layer – much like reading the growth rings of a tree. Each band recorded a brief chapter of the individual’s life. When lead entered the body, it left a vivid chemical signature.

These signatures revealed that exposure was not rare or accidental; it occurred repeatedly over time.

Where did this lead come from?

Our findings show that early humans were never shielded from lead by the natural world. On the contrary, lead was part of their world.

The lead we found wasn’t from mining or smelting – those activities belong to relatively recent human history.

Instead, it likely came from natural sources such as volcanic dust, mineral-rich soils, and groundwater flowing through lead-bearing rocks in caves. During times of drought or food shortage, early humans might have dug for water or eaten plants and roots that absorbed lead from the soil.

Every fossil tooth we study is a record of survival: a small diary of the individual’s early life, written in minerals instead of words. These ancient traces tell us that even as our ancestors struggled to find food, shelter and community, they were also navigating a world filled with unseen dangers.

From fossil teeth to living brain cells

To understand how this ancient exposure might have affected brain development, we teamed up with geneticists and neuroscientists, and used stem cells to grow tiny versions of human brain tissue, called brain organoids. These small collections of cells have many of the features of developing human brain tissue.


NOVA1 is a gene that orchestrates early neurodevelopment; it also initiates the response of brain cells to lead contamination. We gave some of these organoids the modern human version of NOVA1, and others an archaic, extinct version similar to the one carried by Neanderthals and Denisovans.

Then, we exposed both sets of organoids to very small, realistic amounts of lead – what ancient humans might have encountered naturally.

The difference was striking. The organoids with the ancient gene showed clear signs of stress. Neural connections didn’t form as efficiently, and key pathways linked to communication and social behaviour were disrupted. The modern-gene organoids, however, were far more resilient.

Somewhere along the evolutionary path, it seems, our species developed better built-in protection against the damaging effects of lead.

A story of struggle

The environment – complete with lead exposure – pushed modern human populations to adapt. Individuals with genetic variations that help them resist a threat are more likely to survive and pass those traits on to future generations.

In this way, lead exposure may have been one of the many unseen forces that sculpted the human story. By favouring genes that strengthened our brains against environmental stress, it could have subtly shaped the way our neural networks developed, influencing everything from cognition to the early roots of speech and social connection.

None of this changes the fact that lead is toxic. It remains one of the most damaging substances for our brains.

But evolution often works through struggle – even negative experiences can leave lasting, sometimes beneficial marks on our species.

New context for a modern problem

Understanding our long relationship with lead gives new context to a very modern problem. Despite decades of bans and regulations, lead poisoning remains a global health issue. The most recent UNICEF estimates show that one in three children worldwide still have blood lead levels high enough to cause harm.

Our discovery shows that human biology evolved in a world full of chemical challenges. What has changed is not the presence of toxic substances, but the intensity of our exposure.

When we look at the past through the lens of science, we don’t just uncover old bones; we uncover ourselves.

In the industrial age, we’ve massively amplified what used to be short and infrequent natural exposure. By studying how our ancestors’ bodies and genes responded to environmental stress, we can learn how to build a healthier, more resilient future.


Renaud Joannes-Boyau receives funding from the Australian Research Council.

Manish Arora receives funding from US National Institutes of Health. He is the founder of Linus Biotechnology, a start-up company that develops biomarkers for various health disorders.

Alysson R. Muotri does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. Human ancestors were exposed to lead millions of years ago, and it shaped our evolution – https://theconversation.com/human-ancestors-were-exposed-to-lead-millions-of-years-ago-and-it-shaped-our-evolution-267318