By Daniel S. Schiff, Assistant Professor of Political Science, Purdue University

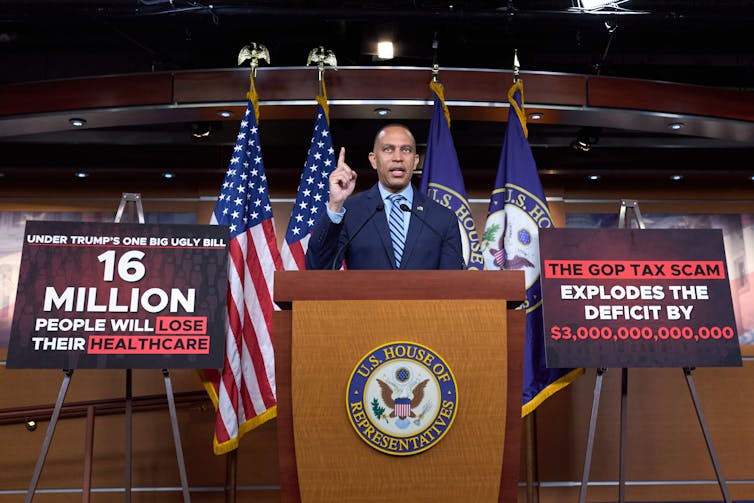

It is “the policy of the United States to promote AI literacy and proficiency among Americans,” reads an executive order President Donald Trump issued on April 23, 2025. The executive order, titled Advancing Artificial Intelligence Education for American Youth, signals that advancing AI literacy is now an official national priority.

This raises a series of important questions: What exactly is AI literacy, who needs it, and how do you go about building it thoughtfully and responsibly?

The implications of AI literacy, or lack thereof, are far-reaching. They extend beyond national ambitions to remain “a global leader in this technological revolution” or even prepare an “AI-skilled workforce,” as the executive order states. Without basic literacy, citizens and consumers are not well equipped to understand the algorithmic platforms and decisions that affect so many domains of their lives: government services, privacy, lending, health care, news recommendations and more. And the lack of AI literacy risks ceding important aspects of society’s future to a handful of multinational companies.

How, then, can institutions help people understand and use – or resist – AI as individuals, workers, parents, innovators, job seekers, students, employers and citizens? We are a policy scientist and two educational researchers who study AI literacy, and we explore these issues in our research.

What AI literacy is and isn’t

At its foundation, AI literacy includes a mix of knowledge, skills and attitudes that are technical, social and ethical in nature. According to one prominent definition, AI literacy refers to “a set of competencies that enables individuals to critically evaluate AI technologies; communicate and collaborate effectively with AI; and use AI as a tool online, at home, and in the workplace.”

AI literacy is not simply programming or the mechanics of neural networks, and it is certainly not just prompt engineering – that is, the act of carefully writing prompts for chatbots. Vibe coding, or using AI to write software code, might be fun and important, but restricting the definition of literacy to the newest trend or the latest need of employers won’t cover the bases in the long term. And while a single master definition may not be needed, or even desirable, too much variation makes it tricky to decide on organizational, educational or policy strategies.

Who needs AI literacy? Everyone, including the employees and students using it, and the citizens grappling with its growing impacts. Every sector and sphere of society is now involved with AI, even if this isn’t always easy for people to see.

Exactly how much literacy everyone needs and how to get there are much tougher questions. Are a few quick HR training sessions enough, or do we need to embed AI across K-12 curricula and deliver university microcredentials and hands-on workshops? There is much that researchers don’t know, which is why AI literacy and the effectiveness of different training approaches need to be measured.

Measuring AI literacy

While there is a growing and bipartisan consensus that AI literacy matters, there’s much less consensus on how to actually understand people’s AI literacy levels. Researchers have focused on different aspects, such as technical or ethical skills, or on different populations – for example, business managers and students – or even on subdomains like generative AI.

A recent review study identified more than a dozen questionnaires designed to measure AI literacy, the vast majority of which rely on self-reported responses to questions and statements such as “I feel confident about using AI.” There’s also a lack of testing to see whether these questionnaires work well for people from different cultural backgrounds.

Moreover, the rise of generative AI has exposed gaps and challenges: Is it possible to create a stable way to measure AI literacy when AI is itself so dynamic?

In our research collaboration, we’ve tried to help address some of these problems. In particular, we’ve focused on creating objective knowledge assessments, such as multiple-choice surveys tested with thorough statistical analyses to ensure that they accurately measure AI literacy. We’ve so far tested a multiple-choice survey in the U.S., U.K. and Germany and found that it works consistently and fairly across these three countries.

There’s a lot more work to do to create reliable and feasible testing approaches. But going forward, just asking people to self-report their AI literacy probably isn’t enough to understand where different groups of people are and what supports they need.

Approaches to building AI literacy

Governments, universities and industry are trying to advance AI literacy.

Finland launched the Elements of AI series in 2018 with the hope of educating its general public on AI. Estonia’s AI Leap initiative partners with Anthropic and OpenAI to provide access to AI tools for tens of thousands of students and thousands of teachers. And China is now requiring at least eight hours of AI education annually as early as elementary school, which goes a step beyond the new U.S. executive order. At the university level, Purdue University and the University of Pennsylvania have launched new master’s programs in AI, targeting future AI leaders.

Despite their ambition, these initiatives face an unclear and evolving understanding of AI literacy. They also face challenges in measuring effectiveness, and little is known about which teaching approaches actually work. And there are long-standing issues with respect to equity – for example, reaching schools, communities, segments of the population and businesses that are stretched or under-resourced.

Next moves on AI literacy

Based on our research, experience as educators and collaboration with policymakers and technology companies, we think a few steps might be prudent.

Building AI literacy starts with recognizing it’s not just about tech: People also need to grasp the social and ethical sides of the technology. To see whether we’re getting there, we researchers and educators should use clear, reliable tests that track progress for different age groups and communities. Universities and companies can try out new teaching ideas first, then share what works through an independent hub. Educators, meanwhile, need proper training and resources, not just additional curricula, to bring AI into the classroom. And because opportunity isn’t spread evenly, partnerships that reach under-resourced schools and neighborhoods are essential so everyone can benefit.

Critically, achieving widespread AI literacy may be even harder than building digital and media literacy, so getting there will require serious investment in education and research, not cuts.

There is widespread consensus that AI literacy is important, whether to boost AI trust and adoption or to empower citizens to challenge AI or shape its future. As with AI itself, we believe it’s important to approach AI literacy carefully, avoiding hype or an overly technical focus. The right approach can prepare students to become “active and responsible participants in the workforce of the future” and empower Americans to “thrive in an increasingly digital society,” as the AI literacy executive order calls for.



Funding from Google Research helped to support part of the authors’ research on AI literacy.

Funding from the German Federal Ministry of Education and Research under the funding code 16DHBKI051 helped to support part of the authors’ research on AI literacy.

Arne Bewersdorff does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. AI literacy: What it is, what it isn’t, who needs it and why it’s hard to define – https://theconversation.com/ai-literacy-what-it-is-what-it-isnt-who-needs-it-and-why-its-hard-to-define-256061