Source: The Conversation – Africa – By Michele Van Eck, Associate Professor in the School of Law, University of the Witwatersrand, who specialises in contracts, legal ethics and education.

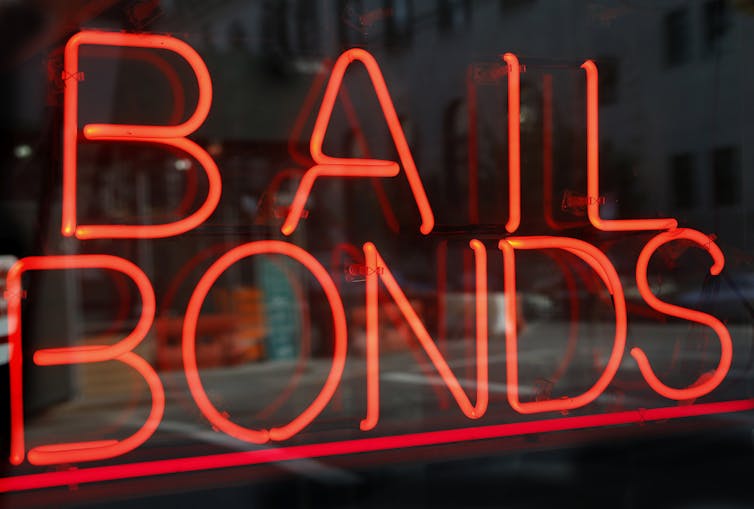

Education is widely regarded as the road to a better life. Yet the rising cost of tertiary education means many students can only go to university if they get financial aid, bursaries or loans.

South Africa’s National Student Financial Aid Scheme (NSFAS) offers students bursaries or loans which provide allowances for tuition and registration fees, books, travel and accommodation. But this type of funding applies only under specific and limited conditions. Many students fall outside its scope.

Students who are not enrolled for a qualification that is approved by the Department of Higher Education, or who wish to study for a second undergraduate qualification, or who are studying at private institutions, don’t qualify to get the funding.

The result is that many students can’t keep up with paying their university fees. In 2025 South African universities collectively held about R9.3 billion (US$528 million) in student debt that had remained unpaid since 2023.

Universities have been trying different methods to pressure students and graduates to pay outstanding student debts. This has included withholding of degree certificates, academic transcripts and marks.

Universities require funding to operate effectively, pay staff and maintain infrastructure. But withholding academic documents from indebted students may prevent them from securing employment – the very means by which they could repay their debts. These practices, while commercially defensible, often have the opposite of their intended effect. According to Unesco, “student loans generally have catastrophic effects for students and families across the world”.

It seems reasonable to conclude that student debt collection practices may entrench poverty and make it harder for graduates to get jobs.

From recent court cases, it appears that this issue is especially pronounced in the legal profession. Law graduates face additional scrutiny, as admission to the profession requires not only academic qualifications but also proof of moral character. The Legal Practice Act 28 of 2014 mandates that candidates be “fit and proper” individuals, embodying values such as honesty, integrity and reliability. Outstanding debt may be seen as being at odds with the values of honesty and integrity.

Fulfilling financial obligations can indeed have a bearing on ethics (a field I study as a legal scholar). But as I argue in a recent paper, it’s necessary to distinguish between graduates who are unwilling to pay and those who are genuinely unable to.

I also propose a couple of ways this could be achieved so that universities get their money and graduates get their start in working life.

How universities collect debt

Unlike South Africa, some countries have taken steps to deal with the impact of student debt.

My paper highlights that, in the United States, several states don’t allow universities and colleges to withhold degree certificates and transcripts (records of academic activity) over unpaid fees. They recognise that those debt-collection practices hinder employment and make inequality worse. Instead, they promote other strategies, like repayment plans related to income, or policies for how to treat students who are experiencing hardship.

In the United Kingdom, universities are advised not to use academic sanctions to recover non-academic debts, such as accommodation fees. Consumer protection laws treat students as consumers, allowing them to challenge unfair contractual terms. If a university’s contract includes provisions to withhold degrees for unpaid fees, students may contest these clauses as unjust.

South Africa lacks similar legal safeguards. Each university sets its own rules. These range from students not being able to graduate unless all fees are paid, to the withholding of certificates from students not in good financial standing, and even preventing students from viewing their examination scripts if they owe money. Some examples may be found at the University of the Free State (page 27), University of Pretoria (page 16) and University of the Witwatersrand.

Law students face additional hurdles

In the legal profession, financial responsibility is often tied to ethical conduct. Lawyers manage trust accounts, client funds and sensitive legal matters. Integrity is non-negotiable.

However, the inability to pay student debts is not inherently dishonest. Some students fall into debt due to circumstances beyond their control, like family obligations, socio-economic conditions, unemployment or the sheer cost of education.

South African courts have grappled with outstanding student debts when it comes to admitting law graduates to the profession. The courts’ approach has been inconsistent.

In Ex Parte Tlotlego the court emphasised that poverty should not bar entry into the legal profession. It said courts should not require proof of debt repayment arrangements, which would be unfair to students from disadvantaged backgrounds.

But in Ex Parte Makamu the court found that a law graduate must still demonstrate how they intend to settle their debts to satisfy the ethical standards of honesty and integrity.

More recently, Ex Parte Galela reinforced this view. The court declined the application for admission because it wasn’t clear why the law graduate hadn’t paid off their debt. It suggested that financial irresponsibility could reflect poorly on the graduate’s character.

The courts’ approach and general student debt-collection practices often fail to differentiate between students who cannot pay and those who choose not to. This distinction is vital. A student who ignores their debt without justification may raise ethical concerns. But a student who is willing to pay yet lacks the financial means should not be penalised.

Solutions

The solution lies in balancing the financial interests of universities with the socio-economic realities of students. Student debts must be repaid, but repayment mechanisms must also be fair and sustainable.

There have been attempts to find a solution, such as the draft Student Relief Bill, which proposes setting up a Student Debt Relief Fund. But that might place unsustainable pressure on the economy.

I have another proposal: allowing graduates to receive their degree certificates regardless of outstanding debt, along with two legislative interventions. These are:

- Automatic garnishee orders: upon graduation, an automatic garnishee order (a court order directing an employer to deduct a certain amount from an employee’s income) could be placed on future salaries of a graduate. This would ensure that student debt is repaid over time.

- Amendment to the Prescription Act 68 of 1969: this could exclude student debt from prescribing (becoming too old to collect). Normally, such a debt would prescribe after three years. An amendment would allow universities to recover debts for the duration of graduates’ employment, not just within three years.

These measures would uphold the financial sustainability of universities while protecting the dignity and future employment prospects of graduates.

Michele Van Eck does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

– ref. South Africa’s student debt trap: two options that could help resolve the problem – https://theconversation.com/south-africas-student-debt-trap-two-options-that-could-help-resolve-the-problem-262555